AI: cognitive labor glut + new guys

Why is the advent of AI a big deal, and more worrying than previous advents?

I think there are actually two interesting things going on that make AI importantly different from previous technologies.

I. Industrializing the cognitive labor supply

I.a. Scale: the incoming ocean of new cognitive labor

Until lately, useful cognitive labor has basically come from human brains. This has made it slow to scale up, and expensive to use.

AI is the industrialization of cognitive labor.

Soon we will have far more cognitive labor available, and far more cheaply.

Since cognitive labor is a kind of resource that humans use heavily for everything—from navigating coffee tables to navigating corporate takeovers—this will probably be revolutionary. It will be like that time we moved from picking plants we could find to growing them intentionally. We have been picking thoughts we could find, growing wild in human minds, and now we are firing up the thought factories.

Increasing cognitive labor isn’t new—we have increased the human population, and we have constantly been increasing effective cognitive labor by augmenting human brains with concepts and tools. But this is all slow and clumsy. And increasing the population increases demand for cognitive labor as well as supply. Augmenting human brains retains a heavy bottleneck on human brains. Maybe nothing here is conceptually new, but quantitative differences matter. On the current path, we will soon be inundated with non-human cognitive labor. (We are already starting to get quite damp.)

I.b. Distribution: who controls the cognitive labor?

Until lately, because cognitive labor has been intimately tied to human brains, it has been relatively evenly distributed. Every person has a birthright of one brain’s worth of thinking. They regularly have to sell a good chunk of it to live, and the CEO of a large company might indirectly control the output of a million times as much, which might seem like a big discrepancy. But it seems potentially important that people usually keep a fraction of their cognitive labor for themselves (if only because it’s hard to sell it all), and that the cognitive labor retained by laborers makes up a large share of all cognitive labor in the world.

People use their own cognitive labor partly to think about their political interests, to learn about the world critically, and to negotiate and act on their own behalf to a non-negligible degree. All of this will be harder if a vastly larger amount of cognitive labor is stacked against them in each of these areas. It is already hard to figure out what’s going on and what to do about it, with everyday people pitted against professional political spin, among other forces. It will be worse if the forces of manipulation have access to piles of cognitive labor that normal people have no chance against.

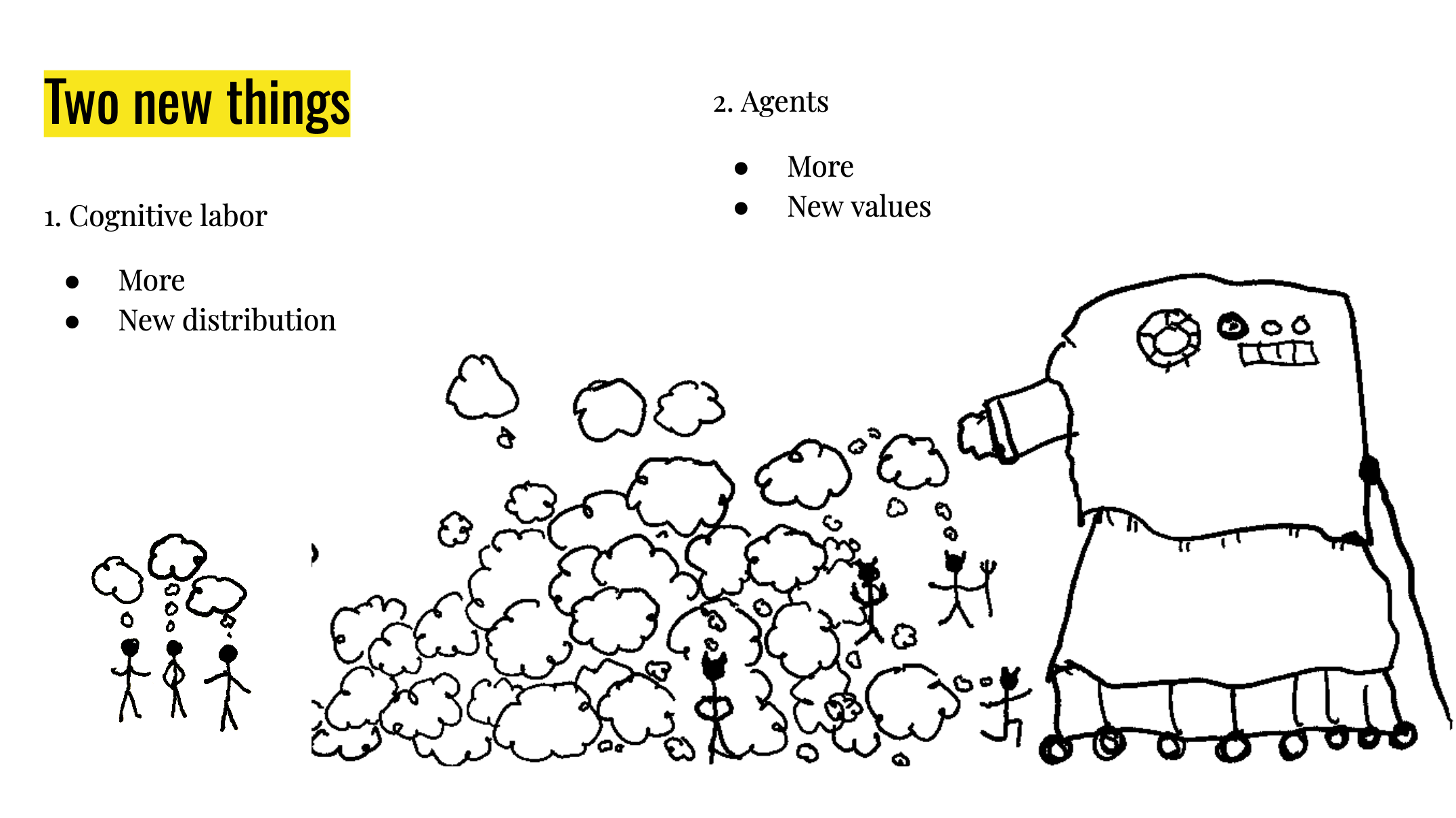

II. New guys

Cognitive labor doesn’t just float around on its own. When it comes from human minds, each bit is controlled by a specific person who has their own goals and interests and goes around in the world acting to advance them. In jargon-land I might call this an ‘agent’, but here I’m going to call it a (gender neutral) ‘guy’. Cognitive labor can also be produced in a non-guy format: various tools effectively think, but probably don’t have their own agenda, don’t understand the world richly enough to act in it, and can’t act independently.

However, with AI, it looks like we are going to make more guys. We see people scrambling to turn AI into guy-format as ‘AI agents’, and this makes a lot of sense theoretically: AI guys are a lot more economically valuable than a bundle of tools that needs to be wielded by a human guy.

II.a. Scale: The incoming horde of new guys

Until now, the only way to make new guys was via human procreation (at least strategically relevant guys—dogs are a kind of guy, but one commanding so little cognitive labor that they do not change the situation much for humans). This means the number of guys in the world can’t change extremely rapidly.

The number of guys in a particular place or society can change rapidly, and historically a large influx of new guys entering your society has been an issue of great concern.

AI will mean new guys can be spun up trivially, and since there are all kinds of uses for them, we can expect a massive explosion of guys unlike anything we have seen before (at least among strategically relevant guys).

II.b. Character: the new guys are alien and have unknown values

Until now, any new relevant guys created were human, and therefore had a lot in common with other humans. For the advanced AIs we are set to create on the current path, we do not know very well how their minds work, what their values are, or how different those values are from human values. We don’t know what they would do with the world if they were free to act without displeased humans being able to disempower them.

These two things, and four sub-things, would each be a big deal on their own, but in my opinion none of them alone would probably constitute an extinction risk. An ocean of new cognitive labor on its own seems actively great. A more unequal distribution of cognitive labor seems bad, but not fatal. What is very unfortunate is getting all of these changes at once: the new ocean of cognitive labor, newly able to be distributed very unequally, seems likely to fall mostly into the hands of the new guys, while the new guys do not have values conducive to a long future of human flourishing, or to any other particular thing we wish for in the future.

(Pictures by me, from my 2023 EAG talk, where I also covered these thoughts.)