-

Why are people so scared of causing fear?

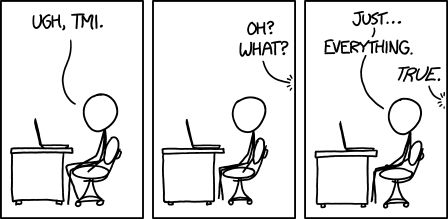

An odd aspect of discussing serious threats is the amount of concern people express about you causing other people to be concerned. This kind of makes sense for interlocutors who don’t believe in the threat itself, or think it is overblown (though in that case it is perhaps strange to focus on altruistic concern for potential frightened onlookers rather than the object-level disagreement). But often the person is not actually disputing the threat, they purportedly just want to protect the public from fear, or avoid causing ‘panic’.

A memorable case: on what was to be one of the last normal weekends in 2020, I took an Uber with a friend to an event. On the way we discussed the rising warnings of an international pandemic and our preparations. But my friend wanted us to talk more discreetly, lest we scare the Uber driver.

Why on Earth would it be bad to scare the Uber driver? My friend believed as much as I did that a real and deadly virus was spreading and there was an imminent risk of this affecting us all, including the Uber driver. Didn’t the Uber driver have an interest in knowing about it? Wasn’t it, if anything, our responsibility to tell the Uber driver? Is the concern that, as a normal person, the Uber driver is incompetent to manage himself, and will just scream and run around or buy poorly selected prepper equipment?

In conversations about AI risk, I sometimes see the same thing. Geoffrey Hinton says he thinks there’s a 10-20% chance of human extinction, and some people’s genuine top concern seems to be that maybe the press didn’t add enough disclaimers about the process by which he reached that number, and the public may get unnecessarily worried. I agree it would be non-ideal if people were 20%-level worried when they would only endorse being 7% worried on further methodological inspection. But among non-ideal aspects of a situation where most of the relevant scientists believe their field is heading toward a modest-to-strong shot at killing us, it’s interesting to rate “maybe people will be too concerned” as a top concern.

What is going on? In this particular case, I could imagine behaving this way if the original communication seemed dishonest. But finding this dishonest seems similar to complaining if someone yells “fire!” that they should have yelled “I subjectively guess that it’s highly likely there’s a fire because of the smoke and flames but I’m not an expert”. And it seems like there’s something else going on with wanting that.

A related phenomenon: people casually mention ‘causing a panic’ as a thing that is assumed to be too terrible. Like, yes there’s some upside to warning people that there is a major threat to their lives that they can do something about. Maybe doing that will help stop the world from ending. But! What if they get all emotional? They may not act in the most clear-eyed and rational way. They may talk to each other in epistemically unvirtuous fashions and get even more concerned. They may buy too much toilet paper or run on a perfectly functional bank or protest for poorly designed policies.

I mean, indeed this is all worse than them addressing threats in the most rational and optimal way. But how is it a problem that even ranks compared to them not addressing threats because they don’t know about them? And who are you to not tell people about genuine risks to them that they would act on, to protect their feelings or because they would be more upset than you want?

-

Women should be able to open things

I’m pretty annoyed today, for nominal reasons ranging between ‘petty’ and ‘doesn’t even make sense’. I’m not entirely sure how or if to take oneself seriously when one has such absurd grievances. But that’s a question for another time. I’m here now to tell you about my one potentially valid peeve.

I understand that gender is complicated and difficult, for the whole species (and honestly probably more so for some other species). And it can be hard to tell exactly if anyone is behaving badly regarding it, at least in my modern bubble. Maybe women just aren’t that into designing programming languages? Maybe the thing I’m saying is just boring and a man is saying a more interesting thing?

But a thing that is undeniable is that women want to open jars, dammit! What’s your nuanced explanation there, Bonne Maman? Does the proper amount of friction for maintaining spread safety fall just between the male and female human grip strength distributions?

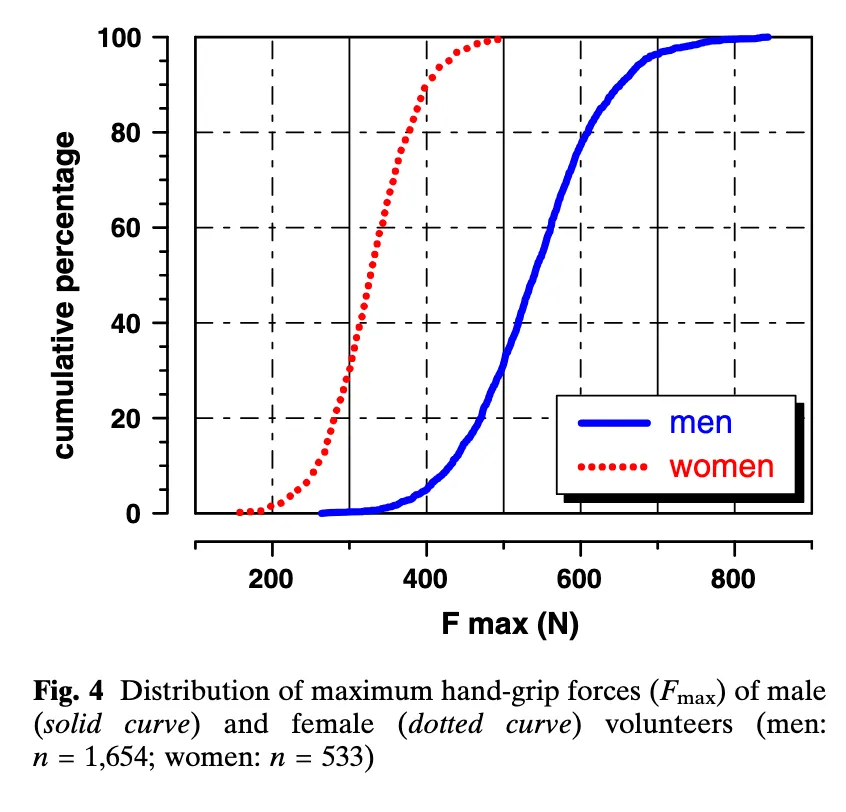

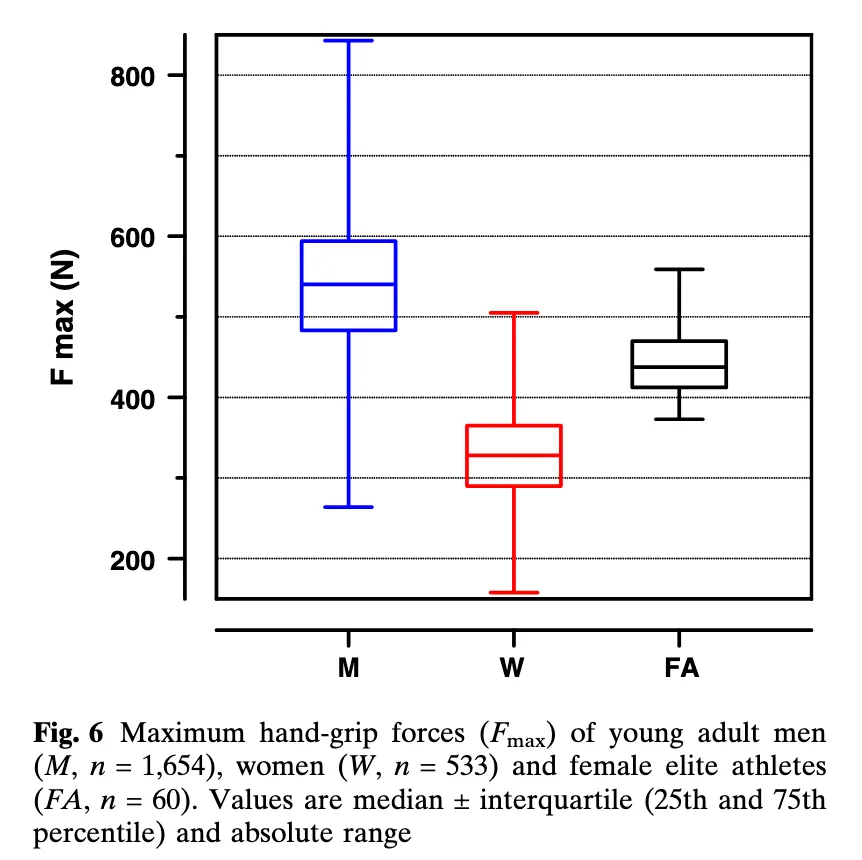

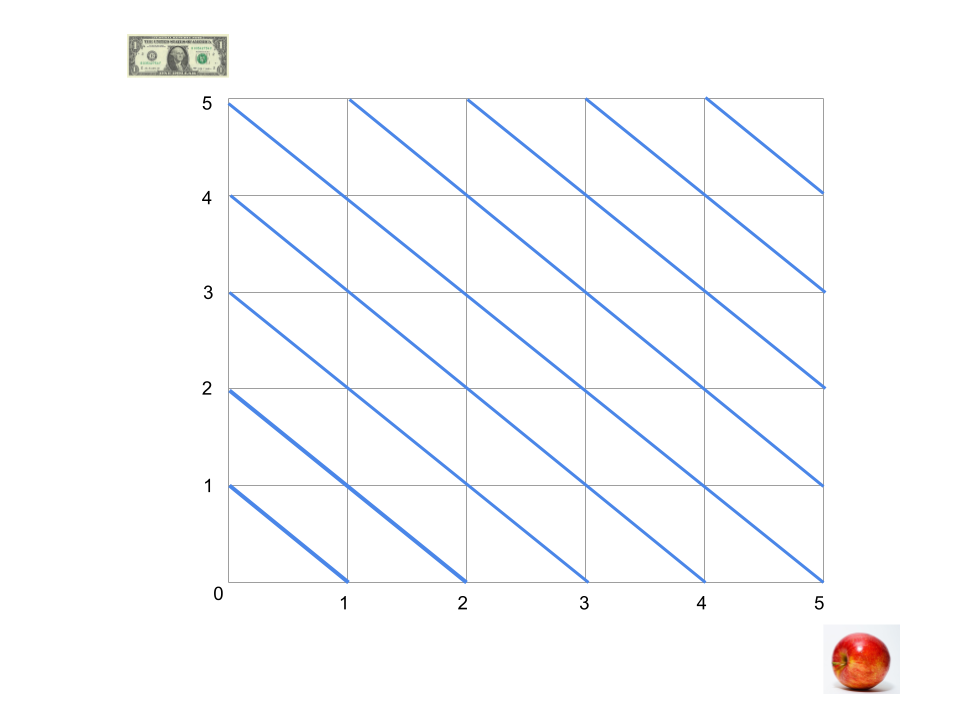

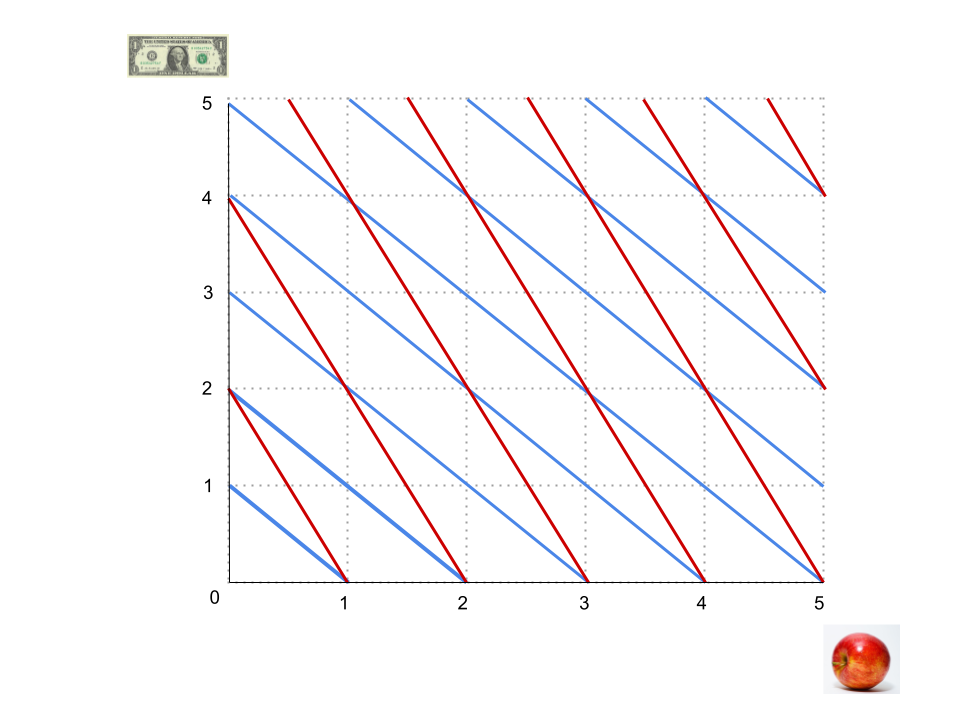

This study suggests that threshold would be about 400 N Fmax (though this would not stop most elite female athletes from acquiring jam, as the second figure shows, and the pictured participants are young adults):

The distributions are really surprisingly not-overlapping!

90% of females produced less force than 95% of males. Though female athletes were significantly stronger (444 N) than their untrained female counterparts, this value corresponded to only the 25th percentile of the male subjects.

We know that men and women have different grip strengths! We know that about half of people are women! Why do so many containers require women asking a man for help in order to open them? (Or carrying around an opening tool or living in a kitchen?)

Yes, strength required to open packaging ranges across a wide distribution, but I note that very few are impossible for anyone to open, so it seems like some effort somewhere goes into keeping them in the feasible range, and that effort does not seem to care about it being reliably feasible for people like me. I don’t imagine Bonne Maman wants to stop women getting to their jam—I imagine that nobody cares.

I thought about this most when I lived in a group house with a shared bulk stash of Gatorade, and any time my woman housemate or I wanted to drink a red one we’d have to ask a guy to open it for us. But these days I also often hurt my hands opening (or failing to open) things, and while I’m sure I’m low in the female grip strength distribution—and may also be high on the ‘unreasonable anger about anything nearby when my hands are hurt’ distribution—I don’t think it’s just a me issue, and in the moment it always feels like a ‘fuck you, raspberry jam isn’t meant for you’.

-

Numb mental state shifts

There are different mental states that feel different. Those are relatively obvious. For instance, being angry or drunk or frustrated or besotted.

Then—for me at least—there are different mental states that don’t immediately feel like anything, but where in acting I notice that my behavior is different, or different things feel easy or impossible. For example:

- If I’m in a lot of pain or distress alone, I might feel like I couldn’t compose myself. But in fact if company shows up, it becomes natural to pull myself together.

- If there’s a time limit, I will often find a vast well of motivation that was otherwise non-existent. The day before a deadline I will smoothly (if with a lot of effort) do ten times as much as on a normal day.

- On some days at least, if it’s 8pm, things will become possible that I could only have dreamed of at 11am.

- Riding my bike to the station, then taking it on the subway, then riding it again to get to a party seems like not a big deal with a friend, but like a prohibitive ordeal on my own.

Some of these are very important! For instance, in causing myself to get ten times as much work done sometimes, or to travel to places, or to not despair if things seem impossible at 11am.

But how many more shifts like this do I never notice because I don’t probe the range of relevant behavior in every possible circumstance? If different humans have similar mental architectures in this regard, can I get a list of the common ones somewhere?

-

Understand why AI is a doom-risk in 39 captivating minutes

I’ve really wanted more good short accounts of why AI poses an existential risk. Working on one myself has been one of those incredibly high priorities I keep putting off.

Meanwhile award-winning journalist Ben Bradford of NPR has made a podcast version of my case for AI x-risk that I am thrilled with!

(Bonus within the 39 minutes: Hamza Chaudhry of FLI on what we should do about it. I was delighted to meet him later as a consequence!)

If you or anyone you know could do with a quick and gripping rundown of why this is a problem, try this one.

Get it on any podcasting app here: https://pod.link/1893359212

The NPR press release has more context on the rest of the series, assessing different possible sources of doom.

-

Games that change your mind

Some things you might learn from games are pretty blatant: Trivial Pursuit might teach you trivia, MasterType might teach you about typing, Grand Theft Auto might teach you about driving or crime.

But sometimes games teach people less obvious things—things that are more experiential or ineffable, things that you didn’t know you didn’t know, concepts that stick in your mind, deep things. Here’s my list of games and their interesting real-world updates, as experienced by me or my friends:

**Dominion:** Don’t invest for eternity. When casually improving or protecting or investing in things, it’s easy for me to treat life (and perhaps even the present period) as basically eternal. In fact I shouldn’t, but it can take many years of living to really feel how likely it is that you’ll leave your perfectly wonderful house within two years, or just keep on aging. Dominion lets me feel that in a matter of hours, by tempting me to invest in a beautiful and effective deck that will do amazingly for the rest of eternity, then making the other player win by haphazardly buying a handful of provinces before I’m done. Which is very annoying, and I do hold it against the game.

**The Witness:** there is nothing in The Witness (at least near the start; I haven’t played it all) that you can pick up and take with you. No objects, no points, no mana, no health. It’s just you, walking around in a world. Something about that feels like it would be deeply unsatisfying: like, what is a game, if you can’t get, y’know, things, dings? Part of me thinks that GETTING is equivalent to satisfaction, in spite of all the evidence to the contrary I keep pointing out to it. And The Witness is not where I came to realize that. What The Witness made me feel is that knowledge is a REAL thing you can GET, like an object. Not some hand-wavey second-rate bullshit thing that philosophers pretend to get off on. In The Witness, while your character walks around, impermeable to the world, you come to know more things. And knowing more things lets you go to places you couldn’t go to when you knew fewer things. The game on the computer concretely changes from you picking up knowledge, that ethereal thing in your mind. This is of course how everything is, but I suppose the absence of any other form of ‘picking up things’ in The Witness made me actually feel it.

**Minecraft:** How many of my difficulties in life are not this-life specific. How to live as a creature with different boundaries of personal identity, e.g. the world spirit. Much more about these in my previous post, Mine-craft.

**Return of the Obra Dinn:** At an event where lots of people are saying their names and what they do or something, I am usually bored and don’t expect to remember these things. Return of the Obra Dinn is a game where you have to figure out from minute clues the names and causes of death of a lot of characters. Once at a networking event, I decided to think of it as a sequel to Return of the Obra Dinn: I could see all these people sitting around the table, and my quest was to pin a name and a deal to each of them, and this introductory section was currently showing me crucial information. I found that this was a very different mental state. So I suppose I learned that whatever I was normally doing in ‘trying to learn’ things about the other attendees, it is an extremely pale cousin of the curiosity I can feel in a different mental state, and that this other state is genuinely different, and naturally invoked in RotOD and not in networking introductions.

**Dungeons and Dragons:** Caitlin Elizondo says DnD has given her a few concepts that make a difference to her thinking more generally. The concept of ‘will saves’ has given her more empathy for situations where someone wanted to do something but failed. The six DnD stats help her access the framework where there are different types of competency that are valuable for different tasks: obvious in theory, but easier to think in terms of with this structure.

**Poker:** the feeling of being ‘on tilt’

**Boggle, Set, Ragnarock:** the feeling of flow. Ragnarock is mine, and I would have said I’d experienced ‘flow’ elsewhere, but Ragnarock is sometimes more like an altered state than other such experiences I’ve had.

**Civilization IV:** I used to lose at a scenario then go back and play it again over and over changing things slightly until I won, which gave me a vivid sense of how suboptimal my native strategy is, presumably also in life. Which is obvious in theory, but it’s different to really feel how much better I would live this day if I was doing it the twentieth time with a laser focus on winning.

**Games in general:** the experience of addiction, sadly. I’ve always struggled to keep up habits of taking addictive substances, so I infer I’m unusually safe from chemical addictions (I used to play Civilization for five minutes as a reward if I remembered to take my amphetamines). Games are, I think, the thing I find most seriously addictive. Which has definite downsides, but it is certainly also an interesting experience that helps me understand the wider world better, and where I would be missing something if I just read about addiction in the abstract.

Do you have any to add?

[ETA May 1: I’m adding more that I hear about to the above list; also see many good additions in the comments!]

-

11 ways to be less deferential

I often worry that people are being too deferential about their beliefs. I also hear others worrying about this, and nobody seemingly worrying about the reverse, except perhaps my friends and therapists (and I guess honestly people who know cranks, so that’s a bit troubling).

Which leads me to wonder, supposing it’s true that many people are too deferential, what might people do to change it? And can I offer them useful advice, as a person who might be not deferential enough?

Tonight I talked to Joe Carlsmith about this; here are some ideas mostly from the conversation:

- A thing that has discouraged me from having independent views and broadcasting them is the concern that my views are extremely ignorant. At the normal pace of new information appearing, I am just too slow a reader to be acceptably up on it. At the AI-news rate, it’s very hopeless. And even if you recognize that situation at a high level, it can be easy to get to thinking ‘I want to write something about W, but I’ll need to read X, Y and the sequence on Z and all the responses to it first’.

It was helpful to me to give up on this kind of expectation, and accept that I’m going to be ignorant and have views anyway. I think this is the right thing to do because a) everyone is fairly ignorant and we don’t want the public discussion to be only the few people who don’t realize they are ignorant or care, and b) saying what you guess is true then letting people point you to why you are wrong is often more efficient than scouring all writing on the topic, and c) there’s value from more independent thinking on a topic, and being informed comes with being less independent. Bringing us to—

- Being sufficiently out of the loop can actually help, as long as you are bold enough not to be silenced by this: if you don’t know what others’ views are, you have to come up with your own.

- Focus on having your own beliefs at a relatively high level. For instance, “Shouldn’t we be stopping AI though?.. Wait, does that argument make sense?” is the kind of thing you can think about and discuss reasonably well without needing to know a lot of technical or fast-moving details, until a more manageable few are brought up in the argument. And my sense is that these kinds of questions (e.g. is our basic strategy what it should be?) are actually neglected.

- Which brings us to status. Intellectual deference probably follows normal patterns of status-based deference. So it probably helps to be either high status or arrogant. That’s a lot of effort, but you can have the experience of being high status or arrogant by talking to people who are relatively lower status or deferential, such as children.

- It probably helps to be brought up in a situation where you learned to distrust the thinking of everyone around you. It’s probably ideal to be taught by your parents that everyone else around is an idiot, then to come to distrust your parents’ opinions also.

- That’s hard to get later in life. But perhaps you can get something similar from experiencing apparently venerable intellects confidently asserting things, then later observing them to be false.

- If you are in conversations where it seems like the other person isn’t making sense, try to assume that is what’s going on, rather than the potentially much more salient explanation that you are a fool.

- Give esteem to people asking potentially silly questions. It can help to expose yourself to impressive people who do this.

Niels Bohr quotes are helpful (HT Wikiquote)

- Refuse to ‘understand’ things unless they are very clear. I don’t really know how to do this, because I don’t know what the alternative is like: being steadfastly confused about things seems to come naturally to me and I don’t know how else to be, but maybe you have both affordances available here and could lean one way or the other.

- Something something do real thinking versus fake thinking. Ironically, on this point I am deferring, because I haven’t finished listening to Joe’s (so far very interesting) post.

- If you are going to pass on information that you don’t deeply understand, track that it is a different thing, for instance by saying ‘something something…’

-

Self driving interview

In honor of yesterday’s nonspecific point in the gradual arrival of self-driving cars, an interview with myself.

Interviewer: It sounds like you’re pretty excited about self-driving cars. Weren’t you just saying that unemployment from AI is on some kind of very overlapping continuum with extinction from AI? Isn’t rooting for self-driving cars rooting for AI unemployment here, and thus extinction?

Katja: Hmm. Well first I should say, I’m actually fairly neutral on unemployment in general from technology. If technology makes it overall easier to produce what we want, but empowers some people over others, that change in power might be a downside (or not), but if so, it’s one I’m inclined to solve with direct redistribution rather than having the people who would be disempowered do unnecessary busywork to ‘earn’ their living.

Interviewer: Ok, so you think AI unemployment is different?

Katja: Yes, because it involves the disempowerment of humans in general in favor of non-people entities whose empowerment has a decent chance of spelling our ruin. Doing things the hard way to avoid that happening isn’t busywork, it’s very valuable. I don’t usually want to take sides between different humans systematically—society seems probably best served by letting the most effective production methods win out in most cases. But sometimes there are entities who produce things efficiently, and you still shouldn’t trade with them because it empowers them. It’s a lot like not trading with Nazis (broadly—I’m not saying AI entities are evil in the same way, just that their empowerment has a good chance of leading to genocide or omnicide).

Interviewer: Ok, but aren’t self-driving cars AI?

Katja: Yes, but the class of entities I don’t want to empower isn’t really ‘AI’; it’s more like ‘AI agents’, though also the processes that are creating them, such as LLM companies, which complicates things. Self-driving cars are narrow and not much like entities that can be empowered. And my understanding is that we could have perfectly great self driving without using risky AI. But maybe I should be opposed. I’m not sure how to think about what class of entities I should not want empowered.

Interviewer: Are you just in love with self driving cars because they would be so personally convenient for you?

Katja: That is probably playing a role. A car with a random stranger in it is just so much less what I want most of the time than a car by myself. I like to imagine that Uber was invented like, “New startup idea: Chatroulette but you’re stuck in a moving vehicle with the person!” I would feel worse about the end of driving as a human profession if I felt like human drivers consistently did the job acceptably well. But the rate of drivers around here seeming chemically impaired or choosing to drive on the kerb of the freeway to get around other cars, etc, and also talking to me when I don’t want to talk, means there are a lot of cases where I would like to go somewhere in a car except it seems too awful, so I don’t.

Interviewer: So how was the self driving car last night?

Katja: non-existent. Once I arrived at the airport, the Waymo app informed me that I wasn’t allowed to get cars there, because they are rolling it out slowly or something. I considered trying from one transit stop outside the airport, but since the available Waymo map was a very uninformative cartoon of the Bay Area and it was after midnight, that felt risky.

Interviewer: Did you give up?

Katja: Not immediately. I had also been told that people are taking ‘robotaxis’ all over, so I looked that up. I couldn’t immediately figure out what it was by Googling and looking on the Android app store, so I messaged some friends, and they directed me to an app called ‘RoboTaxi’ purportedly from Tesla but with barely readable and amateurish font and 57 reviews. As is often perplexingly the case with things of importance to a lot of people from very well known brands, I felt like I was exploring an obscure frontier that nobody had tried to use before. (You want to do what?? Get a ride in one of our cars?? And you want to do it through an app?? And you want to know where they are available??) I logged in and it told me the airport was also out of bounds. So then I gave up.

Interviewer: How did that make you feel?

Katja: ashamed

Interviewer: That makes sense. Why did you even so brazenly think you would probably be able to get a self-driving car from the airport?

Katja: well locally, because Waymo had a map indicating that the airport was within their zone, and I figured Tesla wouldn’t have such a can’t-do attitude. But more fundamentally, I guess I haven’t properly internalized how opposed airports are to efficient travel. Seeking a human-driven car after all this, I was reminded further, because the location of the rideshare pickup at the airport and the signage indicating it both seem like they should probably be crimes.

Interviewer: Might it be an even broader problem with your level of techno-optimism? Weren’t you just the other day very disappointed by a futuristic kettle? Perhaps you need to learn that everything is shit?

Katja: maybe, but I don’t know, sometimes technology really changes things. I remember before Uber, when I just had to phone a person at a taxi company and ask them to come and collect me and then wait for an unknown period of time, and worst case give up and walk home.

Interviewer: How was your ride home last night?

Katja: Pretty good. The Lyft driver didn’t perceive my initial desire not to talk, and so we had a detailed discussion of Yemen, his life as an immigrant, his family, arranged marriage, romance in Islam, experiences running different businesses, the nature of business partnership, AI risk, and other drivers’ views on automation. We exchanged details so we could interact again.

Interviewer: Do you really think your quest to instead drive home in sterility wasn’t completely misguided?

Katja: Humans are great, but you have to be allowed to want solitude sometimes. It follows that you should probably be allowed to want solitude while also getting to another location. That said, I probably want solitude unhealthily much, and underrate the loss of human connection from these innovations. Maybe there should be a tax or something.

-

San Francisco: self driving

I’m on a plane heading back to San Francisco. I’ve lived in the Bay Area for most of the years since 2009, and a large fraction of that time the place has felt near the brink of self-driving cars. (Well, everywhere has, but San Francisco feels like the first testing ground for the most interesting experiments in technology.) And that has felt like a big deal. So I kind of expected them to arrive with a good amount of ceremony.

In my own life at least, their actual arrival has been gradual and underwhelming. At some point I learned that you could call Waymos to drive you around a subset of the city of San Francisco. This was wild and exciting, but not actually very useful, since getting anywhere in San Francisco seemed to require traversing a greater subset of it than that, plus I nearly always want to also drive to or from Berkeley. I took Waymos a couple of times, and one of them might have even had transport value.

In the last few days, multiple people not from the Bay Area have casually assumed that I take self-driving taxis all the time, which caused me to investigate and learn that Waymo is now all the way up and down the West Bay Area, seemingly including the airport. So tonight when my plane lands I hope to finally get halfway home without a driver! It’s not clear this will improve my experience, since I will then have to catch a Lyft in downtown San Francisco, and probably explain to them that I have all this luggage because I took a self driving car as far as I possibly could before resorting to a human driver. But I am still excited.

-

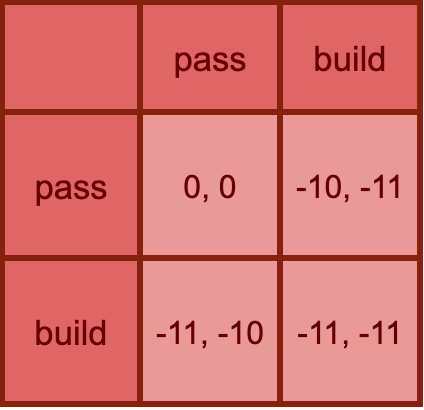

AI unemployment and AI extinction are often the same

My sense is that people think of AI existential risk and AI unemployment as distinct issues.

Some people are extremely concerned about extinction and perhaps even indifferent to total unemployment. Some people think of moderate AI unemployment as a realistic and concerning issue, and AI extinction as science fiction.

I think of AI unemployment and AI extinction risk as basically the same issue, and in likely scenarios, happening together.

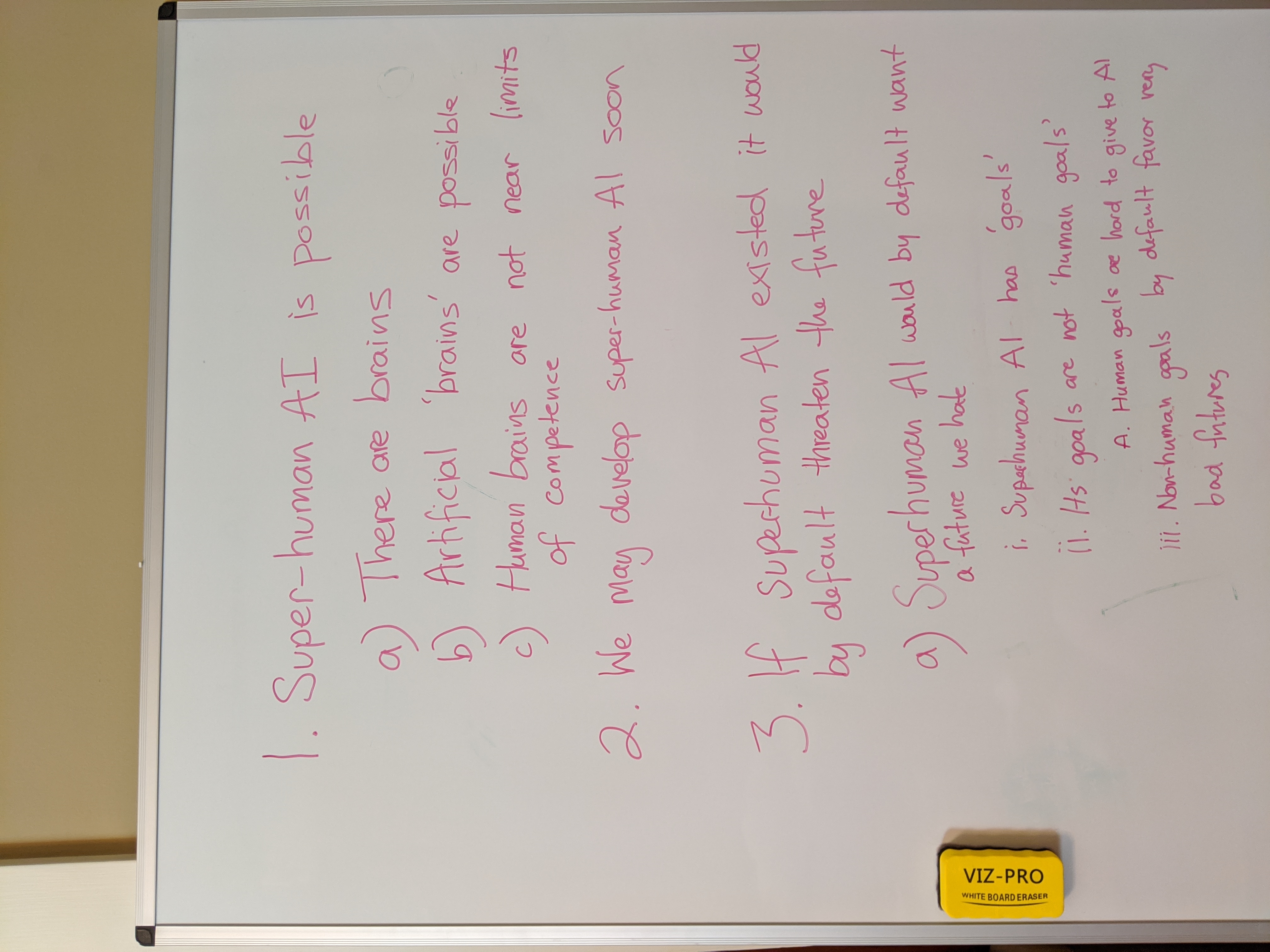

At a very high level, I’d say the argument for human extinction from advanced AI is something like this:

- We’re going to make AI that can do everything better than humans
- We’re going to make that AI into agents that navigate the world independently and do what they want
- We are not going to make those AI agents want the right things

The basic issue is that in the presence of more capable agents with different goals, humans are less able to get resources and influence, and direct them toward the humans’ goals.

One way ‘losing power to more competent agents’ could look is that a surpassingly smart AI agent intentionally eradicates humanity. But killing everyone and controlling the world is a pretty wild corner case of ‘using your competence to control the situation toward your own preferences’. In particular, it has never been seen before, though the new AI situation might make it possible.

The traditional ways that humans make use of competence to influence the world include earning salaries then spending the money on things they want, earning investment income, making and using alliances, persuasion, taking political actions, etc.

If no ultrapowerful AI appears and exterminates us, I think we have every reason to expect ruin from AI sapping our power and resources by these more traditional methods: outcompeting us as labor, outcompeting us as informed capital holders, outclassing us at political strategy and persuasion, and controlling the conversation.

It’s true that if humans were only excluded from the employment path to resources or influence, this would merely be an excruciating upheaval on a massive scale, and probably not herald extinction.

But unemployment here is just the most legible tip of a sprawling shitberg. It’s just not plausible that humans are unemployable, but they are doing well at political strategy and persuasive communication. Unemployment goes with losing power across the board, except insofar as power is granted by whoever has it by virtue of might. That is, insofar as AI cares about empowering us.

So unemployment could happen without extinction (if we successfully built AI that cared about us in the right way) and extinction could happen without unemployment (e.g. if an extremely competent AI system decides to exterminate us). But in a lot of cases they not only coincide, but are the same issue.

Asking if someone is more concerned about unemployment or extinction is like asking if someone primarily wears a seatbelt when driving to avoid having their body flung through the windscreen, or to avoid dying.

If powerful AI agents have their own agendas, those agendas will win out. They might win out in one crushing step, or win out in a trillion small familiar ways.

-

Manhattan: distance and movement

Last Tuesday I went to a Broadway show, Ragtime. I was in the front row, but surprised by how little the action felt real and a few feet away from me. Perhaps the performers were so skilled they didn’t seem like real people, or the sound so loud and sharp that it didn’t feel like people legit singing just over there. We seemed to have a proper chance of getting spit on us, yet I felt as if I was in a separate world. The biggest break in the feeling of vague unreality was when one of the actors on my side of the stage made piercing eye contact with me for a second or so. Which felt very close and warm and human, and I was kind of thrown by that too, though I liked it.

The next day I saw Jonathan Groff perform at the start of a TIME 100 event. He did feel like another human right there in the room, but still somehow surprisingly distant. His song didn’t touch me, though my previous experience delighting in his presence on YouTube led me to have other expectations. And his song was Sondheim, which should also support other expectations. Toward the end he said something about—I think—his work being about connection with us all and being together, and that explicit thought reached me better than anything in the music.

Having enjoyed the first Broadway show of my life the day before, and still being in New York, that evening we went to see another one: Every Brilliant Thing. It was a one-man show currently starring Daniel Radcliffe, and involved a lot of Daniel running on and off the stage and borrowing objects and asking audience members to briefly represent his high school teacher or vet or crush. It was also quite poignant. So if any performance was going to feel like a real person was in the same room as me, and like that person was reaching me emotionally, this would seem to have a good shot. And Daniel did seem like a real person over there. But I recognized the movingness of the story more than actually being moved.

The play was about a long list of things in life that are ‘brilliant’, which I hear as both ‘great’ and as ‘brightening’. The list made me feel uneasy, because I recognized the things as good, but I didn’t feel it. Ice cream, sure. The smell of an old book, okay. Bed, yeah I guess I like it quite a lot more than not-bed. This was all somewhat fitting with the themes of the play, in which the brilliance of things was recognized to different degrees by different characters at different times and levels of depression. But it still made me feel improper and distant from other people: the audience was meant to understand these things as brilliant. I cried a little about that afterwards.

But it also reminded me later of an obvious-but-hard-to-enact thought: if you don’t feel the goodness of things much at some point, it’s not an indication that everything has gone wrong, or that the world is no longer good, or never was, or that there is something badly wrong with you. This is clear in writing, but if I don’t explicitly consider the issue, it is easy to interpret such experiences of nothing seeming good as some variant of ‘nothing is good’. Which is about as correct as having a numb foot and describing the situation as ‘there is no floor’. The picture in my mind is of a sailor in darkness: sometimes you can’t see the stars, and then you have to navigate by memory. And the darkness is about your current location, not the world—it’s possible to get to other non-dark places, even if you can’t see them from here.

One of the last brilliant things involved the sound of a record crackling into the start of a song, which I was surprised to learn that I did feel something about. Perhaps because it reminds me of being alone, in a nice library, with pen and paper. That kind of state is quite close to the sublime! Which reminds me that things can be sublime.

These forays into group emotion left me feeling somewhat like a distant and unmoved observer, but an earlier example is also interesting. In our first hours in town, we rushed out to see a live comedy show. It was in a crowded underground bar, and we were in flip-out seats in a walkway. I was struck by how much even the emcee made us laugh: they were funny, but I bet we laughed more than we would watching the most celebrated comedians on a screen. And it seemed like a fuller laughing experience: laughing with a group, together. I hadn’t really thought of comedy as much better live. I wonder if in-person social orchestration is a big part of making people laugh, beyond the performance-as-separable-pixels-and-sounds. For this show at least, I was easily brought along with the emotions intended, and felt closer.

Is it less vulnerable to go along with comedic emotions than to be moved by serious ones? Or is it that I feel self-conscious in a crowd? I wonder if my relationship with the other people in the room matters more than the performer.

Sitting outside a restaurant later, watching people and cars pass by, I thought about how sometimes everything seems brilliant to me, and remembering that made everything seem a bit brilliant.

-

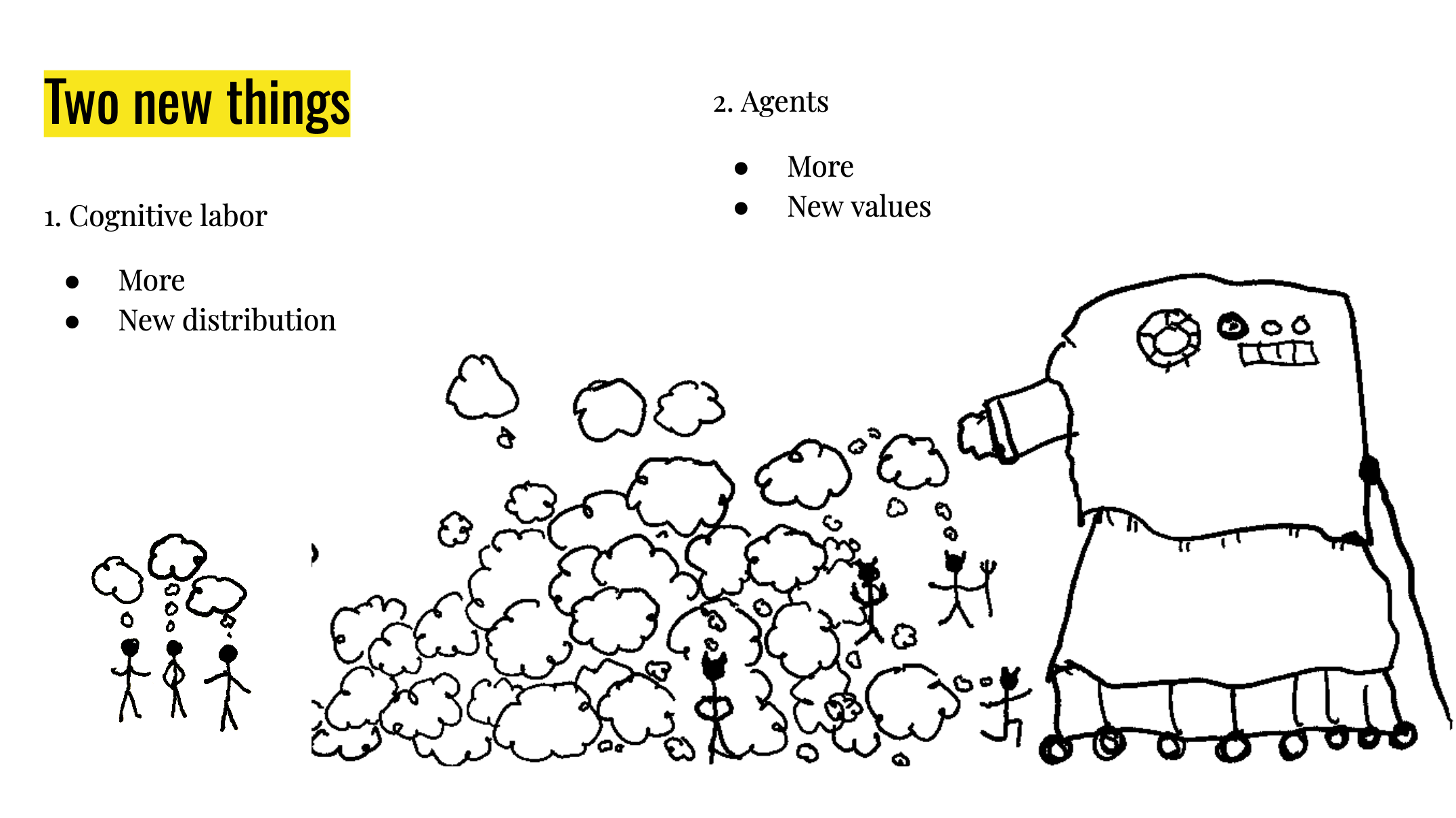

AI: cognitive labor glut + new guys

Why is the advent of AI a big deal, and more worrying than previous advents?

I think there are actually two interesting things going on, that make AI importantly different to previous technologies.

I. Industrializing the cognitive labor supply

I.a. Scale: the incoming ocean of new cognitive labor

Until lately, useful cognitive labor has basically come from human brains. This has made it slow to scale up, and expensive to use.

AI is the industrialization of cognitive labor.

Soon we will have very much more cognitive labor available, and much cheaper.

Since cognitive labor is a kind of resource that humans use heavily for everything—from navigating coffee tables to navigating corporate takeovers—this will probably be revolutionary. It will be like that time we moved from picking plants we could find to growing them intentionally. We have been picking thoughts we could find, growing wild in human minds, and now we are firing up the thought factories.

Increasing cognitive labor isn’t new—we have increased the human population, and we have constantly been increasing effective cognitive labor by augmenting human brains with concepts and tools. But this is all slow and clumsy. And increasing the population increases demand for cognitive labor as well as supply. Augmenting human brains retains a heavy bottleneck on human brains. Maybe nothing here is conceptually new, but quantitative differences matter. On the current path, we will soon be inundated with non-human cognitive labor. (We are already starting to get quite damp.)

I.b. Distribution: who controls the cognitive labor?

Until lately, because cognitive labor is intimately connected to human brains, it has been relatively evenly distributed. Every person has a birthright of one brain’s worth of thinking. They regularly have to sell a good chunk of it to live, and a CEO of a large company might indirectly control the output of a million times as much. Which might seem like a big discrepancy. But the fact that people usually keep a fraction of their cognitive labor for themselves (if only because it’s hard to sell it all), and that cognitive labor retained by laborers makes up a large chunk of cognitive labor in the world seems potentially important. People use their own cognitive labor partly to think about their political interests and learn about the world critically and negotiate and act on their own behalf to a non-negligible degree, which will be harder if there is a vastly larger amount of cognitive labor stacked against them in each of these areas. It’s hard at the moment to figure out what’s going on and what to do about it, with everyday people pitted against professional political spin among other forces. It will be worse if the forces of manipulation have access to piles of cognitive labor that normal people have no chance against.

II. New guys

Cognitive labor doesn’t just float around on its own: when it comes from human minds, each bit is controlled by a specific person who has their own goals and interests and goes around in the world acting to further them. In jargon-land I might call this an ‘agent’, but here I’m going to call it a (gender neutral) ‘guy’. Cognitive labor can be produced in a non-guy format: various tools effectively think, but probably don’t have their own agenda, don’t understand the world richly enough to act in it, or can’t act independently.

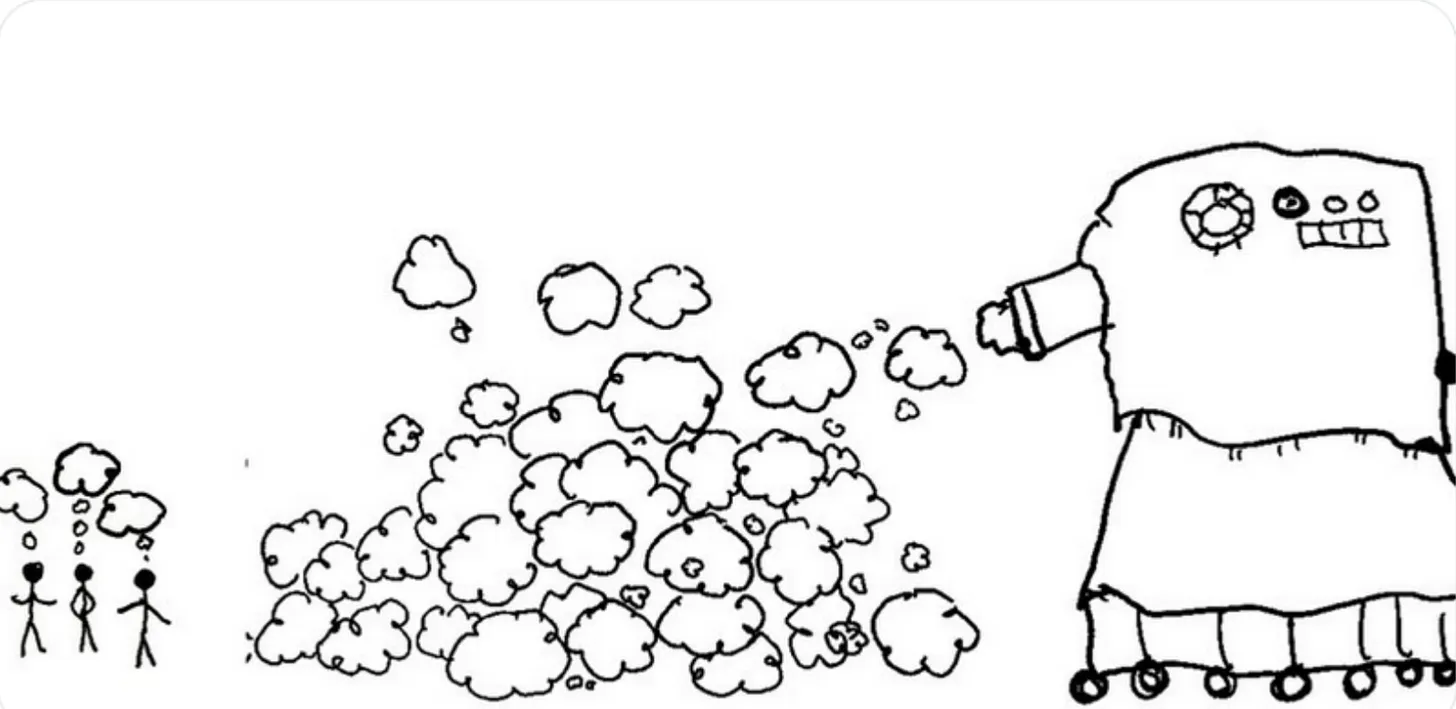

However, with AI, it looks like we are going to make more guys. We see people scrambling to turn AI into guy-format as ‘AI agents’, and this makes a lot of sense theoretically: AI guys are a lot more economically valuable than a bundle of tools that need to be wielded by a human guy.

II.a. Scale: The incoming horde of new guys

Until now, the only way to make new guys was via human procreation (at least strategically relevant guys—dogs are a kind of guy, but one commanding so little cognitive labor that they do not change the situation much for humans). This means the number of guys in the world can’t change extremely rapidly.

The number of guys in a particular place or society can change rapidly, and historically a large influx of new guys entering your society has been an issue of great concern.

AI will mean new guys can be spun up trivially, and since there are all kinds of uses for them, we can expect a massive explosion of guys like we have never before seen (for strategically relevant guys).

II.b. Character: the new guys are alien and have unknown values

Until now, any new relevant guys created were human, and therefore had a lot in common with other humans. For the advanced AI we are set to create on the current path, we do not know how its mind works very well or what its values are, or how different they are from human values. We don’t know what they would do with the world, if they had freedom to do it without humans disempowering them if displeased.

These two things, and four sub-things, would each be a big deal on their own, but in my opinion probably would not constitute an extinction risk. An ocean of new cognitive labor on its own seems actively great. A more unequal distribution of cognitive labor seems bad, but not fatal. Getting all of these changes at once is very unfortunate: the new ocean of cognitive labor, newly able to be distributed very unequally, seems likely to mostly fall in the hands of the new guys, at the same time as the new guys do not have values that are conducive to a long future of human flourishing, or any other particular thing we wish for in future.

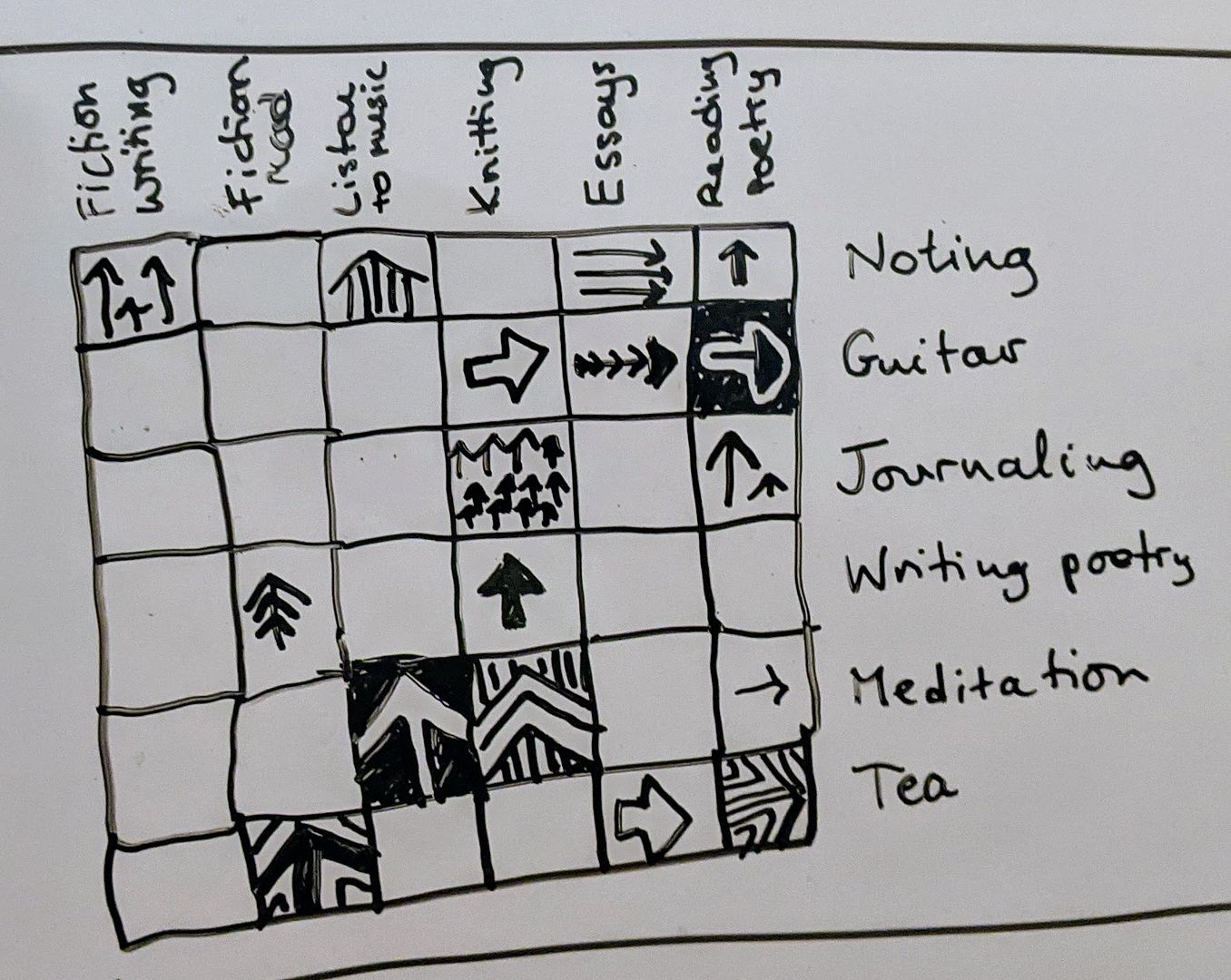

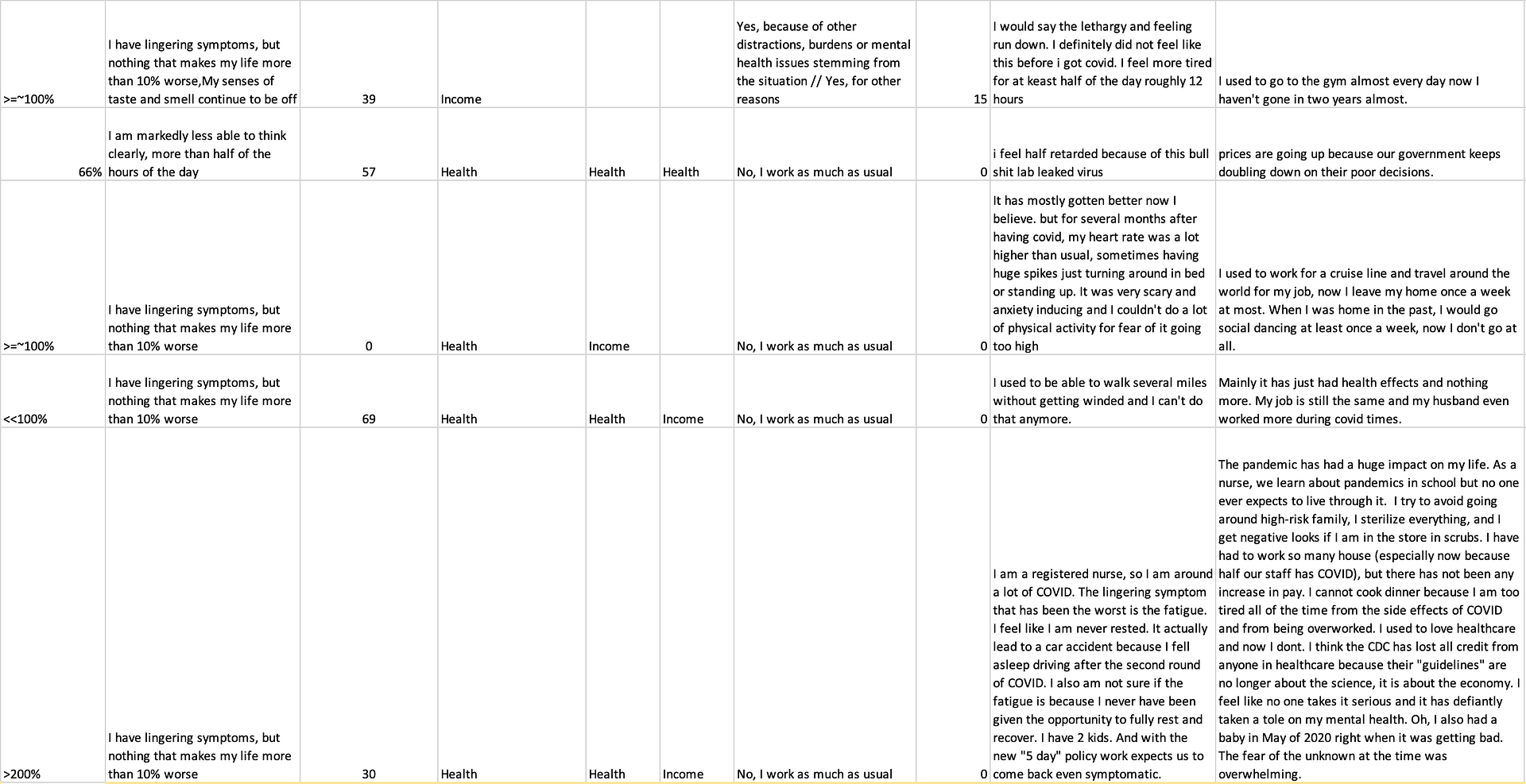

(Pictures by me, from my 2023 EAG talk, where I also covered these thoughts.)

-

Cambridge: the kettle

I arrived in Cambridge, Massachusetts, today with my boyfriend. We have a modest Airbnb apartment, up enough stairs that if you decided to count the flights you would probably have forgotten about the project by the top. It’s pleasant and unassuming, and we were moving slowly toward beginning writing our mandatory blog posts rather too late in the evening when a new presence got our attention.

Feeling dehydrated and migrainish at the end of a day of travel, I wanted to make tea. I quickly found a promising looking plug-in kettle among the minimalist counter apparatus and plugged it in. It was voluminous, smooth, emanating modern perfection, broadcasting with its textureless heft and electric glass interface, “have you lived a life of unnecessary want, guessing if water is remotely the right temperature after you pressed a single primitive button some time ago and then forgot about it? Understand now that everything is truly simple! You have been wronged, misled. The past has been corrupt, but it is over. We have reached the time of professional water heating. Every person can have buttons for every desirable temperature, effortless buttons of light, giving you the simple information you deserve on a luxurious but reasonable lit up interface”. The kettle was even clean. I’m not sure I’ve ever seen that before. It should have been a warning.

I put in water and closed the lid, and was presented with eight lit-up buttons and a big ‘69°F’. I pressed the ‘boil’ button. That made the °F number change and flash, but then it returned and this did not seem to cause anything else that suggested water heating. I pressed the power button. I couldn’t tell if that turned it on or off or neither. I pressed the boil button again. I decided to microwave my water and get on with my life.

I microwaved my water, and we did something else for a bit.

Afterwards however, the kettle was still there. It looked so tantalizingly proficient. I wanted it to boil water. I wanted sleek, efficient boiled water. Water that made you feel like not having water at any well-labeled temperature you wanted whenever you wanted was some kind of thing you had almost forgotten about from your childhood in a developing country. Also, the kettle only had about three kinds of buttons, how hard could it be to find the pattern that made it boil water?

I pressed the boil button and the power button more. I pressed the ‘warm’ button and the ‘green’ button. Sometimes when I pressed a button, most of the other button lights went out. Sometimes things flashed. I tried different orders of buttons. I tried long-pressing buttons. I tried opening and closing it, taking it off and on its stand. Sometimes the water temperature moved to a promising 70, but then just meandered back to 69 again.

My boyfriend suggested that it was broken. Which was very plausible. But it was so responsive that I couldn’t really believe it: it just didn’t have a ‘broken’ vibe. It had a vibe that it was extremely effective and easy and perhaps I was broken.

I’m pretty good at paralyzing electronic devices. It’s as if iPhones have been going about their lives mindlessly doing iPhone stuff until they meet me and suddenly everything is strange and different and they feel self-conscious and can’t remember how to receive text input. Once I merely opened the box of a new laptop and it died, so that one at least can’t be imputed to my poor security or tab-management lifestyle. (I hope this trait bodes well at least for contributing to an AI pause one day.) So even though I had tried pretty hard to make this kettle work, and I’m an intelligent person capable of many kinds of puzzles, I kind of believed that my boyfriend would not have this problem.

So I asked him to help, and while he did apparently share the opinion that he would be able to figure it out near-instantaneously, he was not very interested in prioritizing this. So I played at trying to goad him into it: probably he couldn’t fix it, he was bluffing, I was going to look it up online and he would lose his chance to prove himself. He apparently didn’t need to prove himself.

Somehow though he did become interested shortly after, possibly just out of compassion. He went to the kettle and started pressing buttons. I went to watch. Possibly that broke the magic: he just did the kinds of things that I could think of: press the small number of buttons in different orders and for different durations. It didn’t work. So the situation was just as bad, except now he too really wanted the kettle to work.

Even though this was a very compelling puzzle, I moved to do the reasonable thing and look up the instructions. In bed with a mostly-legible photo of the numbers and words under the kettle, I Googled. The kettle didn’t seem to exist much. Like, one of the most promising links was something that suggested the right kettle was being sold on a shopping site in Botswana, where I was greeted with a popup asking if I wouldn’t rather have the Brazilian version of the site, before being directed to a generic message that the item I requested was not available.

I found a kettle on Amazon that looked suspiciously similar but with a different brand name. Some buyer videos failed to clarify exactly what to do with the buttons. I found a YouTube video from someone purporting to love the kettle, in which he seemed to just press the ‘boil’ button to boil the water, but where the video also cut briefly right there, so who knows? (Why did he cut it? Is there some secret?)

In further search results I found a Reddit post “Is this a good kettle for beginners”, which struck me as both an absurd question, and a question to which “no” was an absurd answer and also clearly the correct one here.

I wonder how much the reason technology often disappoints me is that I have too many hopes for it. I love efficiency and systems improvements that pay off forever, and if a kettle appears and whispers to me that it understands that everything could be better, that it too is on the side of progress, that of course things can be simple and good, then I believe it fully until the moment it betrays me, when I am SHOCKED. Perhaps the world is mostly cynics who never expected their smartphones to lay down the letters they wanted, or prevent them from being woken by spam callers throughout the night, who would have just rolled their eyes at this kettle.

We still haven’t made the kettle work. My boyfriend says he now really thinks it’s broken. He listened to it the old fashioned way, and what it is saying is ‘wee-uhh-wee-uhh-ee-ungk’.

-

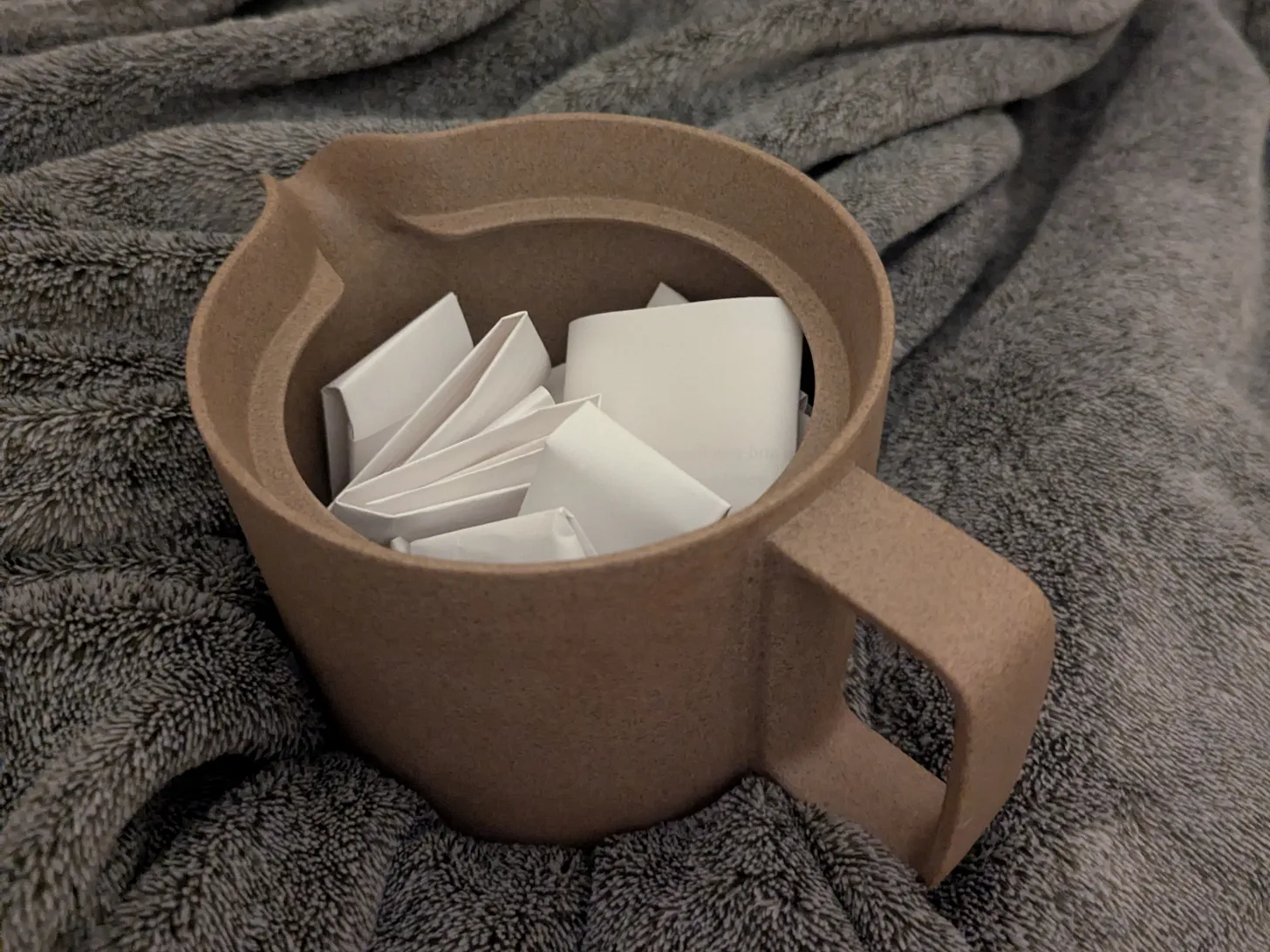

Sleep puzzle system

Here’s an obscure life hack.

If (like me) you:

Don’t like going to bed, due to it conflicting with keeping on doing things

When in bed, wish you had something more engaging and enjoyable to do instead of just lying there waiting—for instance, playing a very compelling computer game until you get tired

Do not in fact reliably get tired from playing a very compelling computer game

Like puzzles

...then a solution that has worked surprisingly well for me before (and that I wrote about previously) is having hard math puzzles handy to think about as I go to sleep. Somehow thinking about math at the bounds of my limited ability to imagine does make me sleepy. And it is also quite compelling.

I haven’t done this for a while, I think due to disorganization and losing track of the puzzles I had, and not having a great source of new ones. But I have recently reinstated this in a nice format.

I think ideally you want this to involve no preparation at all at bed time, and no going on your computer. So you want a nice supply of the right kind of puzzles in a physical place near your bed. So I sorted through puzzles and found a bunch of good-looking ones, printed them out, cut them up, folded them into pleasing parcels, and put them in my ex-housemate’s ex-teapot. Now if I’m ready for bed, I can draw a tiny package, and open something actually fun and helpful to think about in bed.

-

How much should the ideal person cry wolf?

It is a fact about wolves and rationality that you should warn people about wolves quite a few times for every effective wolf attack.

In particular, there is an asymmetry between the costs of having one’s flock devoured and averting a non-eventuating wolf attack. If the carnage is a hundred times worse, then it’s worth up to ninety-nine false alarms to stop it.
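Here is the arithmetic behind that ratio, worked under simplifying assumptions that the text does not spell out and that are mine: every alarm, true or false, costs the same amount $c$; a true alarm fully averts the attack; and an unprevented attack costs $C = 100c$. Suppose $n$ false alarms are raised for every true one.

```latex
% Assumed (illustrative) model: each alarm costs c; a true alarm fully averts an attack costing C.
\begin{aligned}
\text{cost of warning} &= (n + 1)\,c \\
\text{cost of staying quiet} &= C \\
\text{warning pays off when } (n + 1)\,c \le C
  &\iff n \le \frac{C}{c} - 1 \\
\text{with } C = 100\,c: \quad n &\le 99
\end{aligned}
```

At $n = 99$ the two options break even, which is where the "up to ninety-nine" comes from; a cheaper alarm or a worse attack pushes the tolerable number of false alarms higher.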

The original fable was about a boy who would continually lie about wolves, and that is definitely poor form.

But in modern parlance, ‘crying wolf’ seems to be used for just being openly alarmed about things that turn out ok—I don’t hear much implication of deceit.

And in modern sensibilities, being seen to ‘cry wolf’ (by even once raising an alarm that isn’t consummated with disaster) is something people seem to really fear. I think multiple people have asked me about whether AI safety people might have ‘cried wolf’ about some earlier GPT model. I’m not aware of anyone doing that, but the idea that they might have is so tantalizing that it bears investigating. Because if even a few people somewhere did, it would be such a nice embarrassing blow to AI safety people.

And I probably responded in the tempting way: jumping to assure that I don’t recall hearing any such fears from these quarters. But I think that worsens public thought norms by implicitly buying into the unspoken premise that it would be quite shameful and naive to have raised even one warning.

And so relatedly, probably people who see real risks from AI are scared to voice them, lest they be seen to ‘cry wolf’ and tank the credit of the movement for the next round of dangers. Because it is taken for granted that one should only get one chance to raise an alarm. That the first warning must be for the most undeniably big, bad, real wolf.

This is not the wolf lookout system we want.

‘Warnings’ are usually about fairly bad events, and therefore they tend to be worth making when the probability of those events is still low. This creates a real difficulty for society in adjusting people’s credit when the low probability events they have warned of do not come to pass. Most of the time, if the person is right, the events still shouldn’t happen! The person wasn’t saying they were likely! Yet you don’t want to let the alarmist off the hook, with plausible deniability for arbitrarily many alarms.

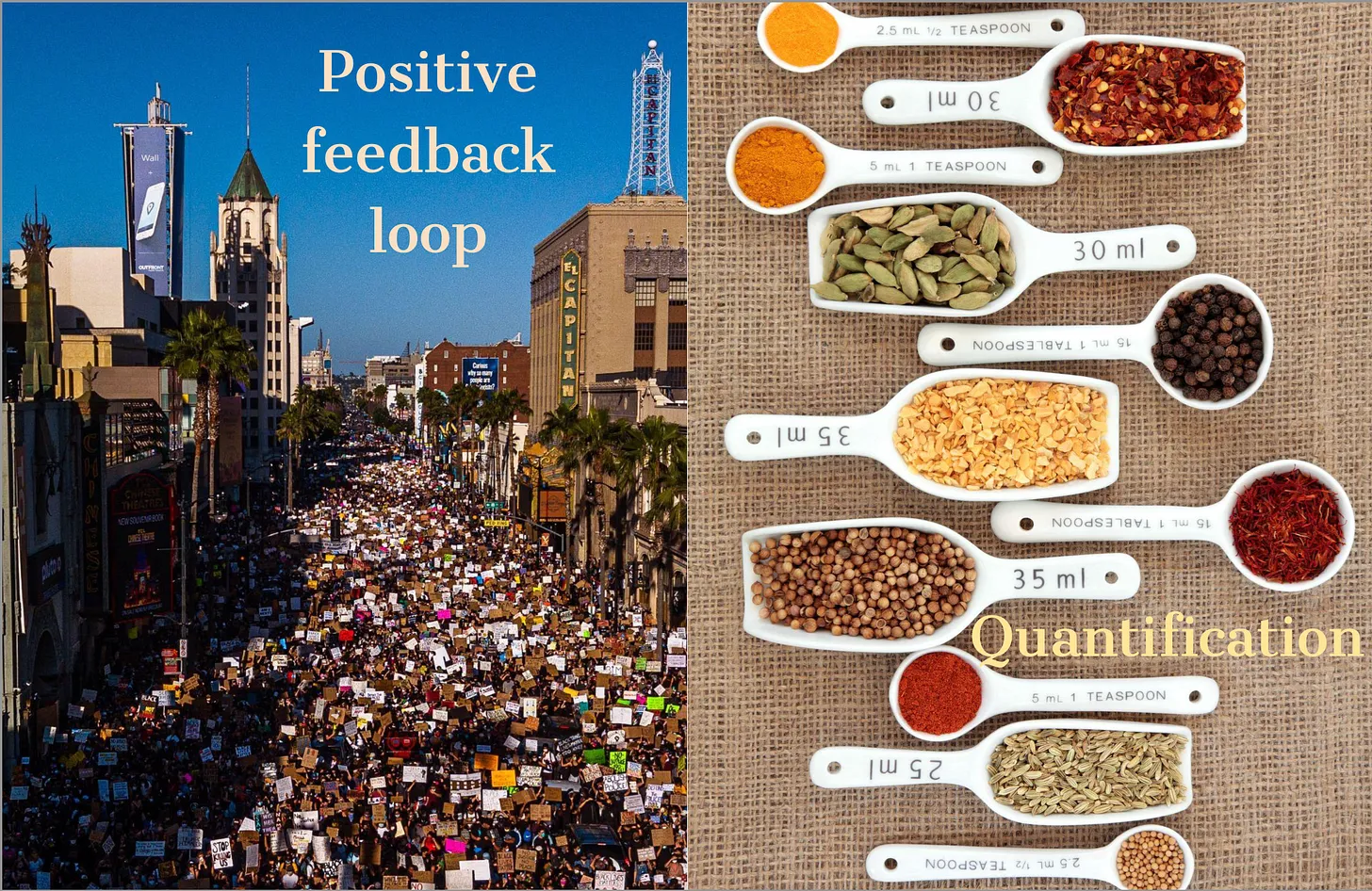

I think the solution to this difficulty should look much more quantitative, like collecting rich track records of the predictions made by a person or a movement, and scoring them well. The present solution of childishly denouncing any unmet danger is insane.
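The post doesn't name a particular scoring rule. As one standard choice, swapped in here purely for illustration, the Brier score (mean squared gap between stated probability and outcome) shows the idea: a 10% warning that doesn't come true costs only a little, while a confident "nothing to worry about" that turns out wrong costs a lot. The track record below is hypothetical, and the function names are mine.

```python
from statistics import mean


def brier_score(forecasts, outcomes):
    """Mean squared gap between stated probabilities and what happened.

    forecasts: probabilities in [0, 1] that the warned-of event occurs.
    outcomes:  1 if the event occurred, 0 if it did not.
    Lower is better; 0 is a perfect record.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("need exactly one outcome per forecast")
    return mean((p - o) ** 2 for p, o in zip(forecasts, outcomes))


def expected_brier(p, q):
    """Expected Brier score for announcing probability p when the true chance is q."""
    return q * (1 - p) ** 2 + (1 - q) * p ** 2


if __name__ == "__main__":
    # Hypothetical record: ten warnings, each giving disaster a 10% chance;
    # one of the ten disasters actually happens.
    outcomes = [1] + [0] * 9
    warner = [0.1] * 10      # raises a low-probability alarm every time
    dismisser = [0.0] * 10   # never raises any alarm

    print(round(brier_score(warner, outcomes), 3))     # prints 0.09
    print(round(brier_score(dismisser, outcomes), 3))  # prints 0.1

    # If the true risk really is 10%, announcing 10% scores best in expectation,
    # beating both silence (0.0) and overstatement (0.5).
    for p in (0.0, 0.1, 0.5):
        print(p, round(expected_brier(p, 0.1), 3))
```

Because the expected score is minimized by announcing the true probability, a rule like this rewards honest low-probability warnings rather than punishing every alarm that doesn't end in disaster.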

And meanwhile if there are bad risks that have a low chance of appearing on every warning, we should still warn of them, and not be too much cowed by innumerate customs.

-

Vibe signaling externalities and the people-to-places pipeline

People are sending signals all the time, and those signals are to my knowledge usually about themselves: they are smart, or kind, or attractive, or not naive, or have their shit together, or care about Palestine, or care about you, or are friendly, or artsy, or professional, or relatively in the know about the cultural currents of TikTok or DC.

People are also taking in signals all the time, and these signals are often about other people, and often even closely related to the signals being intentionally sent: Alice is trying to seem friendly, and Bob perceives her as friendly. But also a lot of signals people take in are about places. People read places as safe or dangerous, lighthearted or depressing, silly or serious, asking them to know more, or get more power, or do more. Suggesting they laugh drunkenly under the moonlight, or get up at 5 and pray. Encouraging submission or rebellion.

These signals that make the world feel one way or another make a big difference to people. They make one neighborhood nice to live in and another feel off, one workplace energizing and another deflating. But they are—to my knowledge—almost entirely unintentional side effects of the ways people behave for other reasons. People don’t dress nicely to collaborate in making you feel like you are in a thriving part of town. They dress nicely to make someone think something about them. And someone probably does, but then the signal is left there for everyone else to sweep into their average perception of the vibe in this part of town.

A lot of ways people behave that affect the vibe are probably not intended as signaling at all—for instance, perhaps I grow roses in my front garden because I love roses, and it nonetheless affects people’s read of the vibe. Or perhaps I keep piles of scrap metal there because I want them for something, and that has a different effect.

But an interesting dynamic to me is that a lot of efforts are going into sending signals about people, and those signals are being read as messages about places. Because places can’t send their own signals, but vibes are a very big part of how people experience places, and place vibes are heavily influenced by people’s attempts to paint themselves as one thing or another.

People try to look not-to-be-messed-with and strangers read the street as dangerous. People try to look generative and strangers read the neighborhood as wealthy enough to have time for this. People try to look rich and people read the area as safe. People try to look beautiful and people read the scene as shallow. People try to look smart, and people read the office as unwelcoming.

In sum I posit that there are massive externalities in vibes, and especially in the vibes of places, and there is a particular path of causality from signaling about people to unintentional signals about places.

(I’m not very confident about all this—I was just thinking about it this evening, arriving in and mildly exploring New York City. I think there’s a lot to be said about organizations’ roles in this that I haven’t gone into—for instance in a bar or restaurant or stand up comedy club, people are trying directly to make you experience a vibe. These are small places where the vibe of the place has been mostly internalized—someone owns it.)

-

AI risk was not invented by AI CEOs to hype their companies

I hear that many people believe that the idea of advanced AI threatening human existence was invented by AI CEOs to hype their products. I’ve even been condescendingly informed of this, as if I am the one at risk of naively accepting AI companies’ preferred narratives.

If you are reading this, you are probably familiar enough with the decades-old AI safety community to know this isn’t true. But I don’t have a good direct way to reach the people who could use this information, and still I hate to leave such a falsehood uncontested. So if this is obvious, I hope the post is still perhaps useful to point more distant and confused people toward.

~

I personally know that AI risk was not invented by the tech CEOs because I have been near the middle of it since at least 2009—before any of the prominent AI companies existed, let alone had CEOs who might be trying to hype their products.

Here are some miscellaneous events over the years to give you a sense of the implausibility of this:

2008 - I attempt to contact Eliezer Yudkowsky to inform him that I am ‘trying to figure out the optimal way to use my life’ and would like to hear a better account of why his plan (of worrying about AI risk) is good. I have read about it online, but would like a clearer account. Later, traveling the world shortly after undergrad, I meet a handful of people in person in the Bay Area who care about this, and one argues strongly that I should prioritize AI risk over my previously preferred causes, e.g. climate change. I decide to think about this.

2009 - I am still not very convinced that AI is the most important thing to work on, but go to stay with the people who are worried about it for a few months. I argue about it a lot with a handful of them. There seem to be about twenty of them locally in the South Bay, though many more who comment on the relevant blogs. My photography collection from this era is quite sparse.

I go to The Singularity Summit for my first time (and its fourth), which is very lively and full of people who are thinking seriously about the future of AI.

2010 - DeepMind is founded. (I am back at school.)

2011 - I start a philosophy PhD at CMU, hoping to be eligible to work somewhere like the Future of Humanity Institute one day, which is a happening hub of discussion about existential risk, AI and other important issues, and which I like to visit.

2012 - I visit the Bay more and hang out with the growing AI risk community there. I visit the UK and do the same. I go to the AGI 2012 Winter Intelligence Conference.

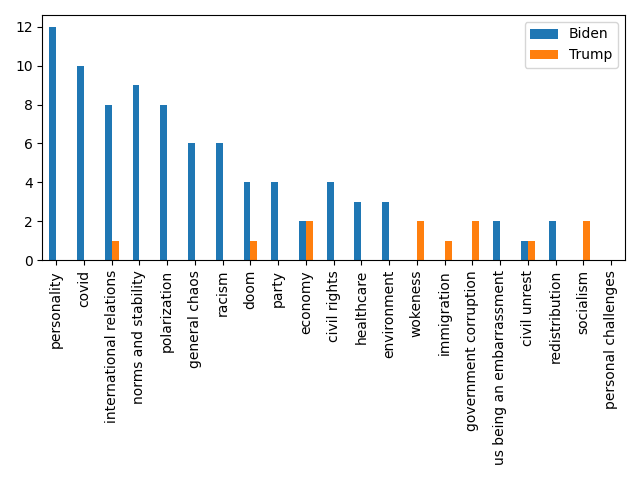

2013 - I move to Berkeley and work at MIRI for a semester during grad school. I measure algorithmic progress over time across various computer science domains, as input to expectations for artificial intelligence in future. I visit the UK and attend the Center for Effective Altruism’s ‘weekend away’ where we have a debate on which cause is best, between global poverty, animal welfare and extinction risk. Extinction risk wins—the crowd leaves having changed their mind in that direction on net. The three advocates just before or after:

2014 - I join MIRI properly. I research the Asilomar Conference and Leó Szilárd as evidence about whether it is worth people trying to deal with risks early, because people around mostly believe that the risks from AI are at least a decade away, and there is disagreement about whether that makes it futile. I run an online reading group about Superintelligence, a new book about AI risk. I co-found AI Impacts, a project to answer questions about the future of AI, because AI risk seems at least fairly plausibly the most important thing to work on, and I want to investigate more and share my thinking with others.

2015 - I attend the first FLI conference—it seems that more people and more prominent people are interested in AI safety! OpenAI is founded.

2016 - I lead a team to run the first Expert Survey on Progress in AI. The median probability given to an outcome of advanced AI that is “Extremely Bad (e.g., human extinction)” is already 5%.

2017 - Some people around me are getting very worried, and saying AGI will happen within several years. My survey gets a shocking amount of media attention, becoming the ‘16th most discussed paper’ in 2017 according to Altmetric. Apparently there is interest in this topic.

2018 - I go to a big workshop for people working on AI risk in the English countryside, and a Chilean summit where I talk on TV and the radio about AI risk. It feels like interest is still picking up, and I feel optimistic about talking to the public.

2019 - GPT-2 comes out. Someone tries to get it to name our house. My favorite names include things like “World peace: tigers and humans” and “rooftop hillside: the highest place in the world”. It is hilarious and useless, but also magical and wild. The things we have worried about for years are feeling more tangible, and people’s ‘AI timelines’ are shrinking.

2020 - The world is reminded that really crazy things can happen. AI Impacts becomes remote. I spend the year with my household, who are almost all working on AI risk. We enjoy whiteboards a lot and run at least one good house conference in this period.

2021 - Anthropic is founded.

-

The salad market mystery

It often happens that I desire kale, but I want it to be clean and cut up, and while shops do sell this product by the bucketload, they are actually only willing to sell it by the bucketload. As a normal-sized person wanting a one-off salad, rather than a family of nine celebrating a kale festival, the market seems very uninterested in my existence.

‘Just put it in the fridge and eat it over the coming week, this isn’t a big deal’ I hear someone say. But I already have several plotlines going on in my life. I don’t want an additional kale arc that I need to track to resolution. I don’t want to commit. I just want a no-strings-attached salad that I can consume and walk away from.

‘Just throw out the rest of the kale’, I hear somebody say. But I don’t like throwing out mounds of delicious food that were elaborately grown and brought to me. This might be a moral failing, but so it is—‘salad + perfectly good kale destruction’ is a much less delicious prospect.

The same situation holds for other greens. I love parsley, but I generally want a fistful, not a promise of parsley for the foreseeable future. Basil becomes black and bad if you don’t eat it for too long, but basically the only way to get some basil is to invest in that outcome.

Why can’t I buy greens in convenient units? I’m not the only person who often eats alone, or doesn’t like throwing out food. My dislike of owning a pile of mildly decaying greens and feeling obliged to eat them is stronger than most, but surely not that rare. Greens don’t last well. I would have thought ‘one meal’s worth’ would be the most likely quantity of greens to want, but instead there is no apparent market for that (at least where I am, in California).

What is going on?

My current best theory: kale is pretty cheap, so a lot of the cost of providing it is in non-kale components, such as packaging and people putting it out on shelves. This means if you sold a single serve of kale, it would cost a disproportionate fraction of the price of five serves of kale. And most people, even if they did just want one serving of kale, would feel unjustified paying a much higher per-weight price for that, and so buy the mound of kale anyway and hope to figure out what to do with it. This might be a false economy, if they are like me and enacting that hope takes attention or is improbable, or it might not.
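That theory can be put as a little arithmetic, under an assumed and purely hypothetical linear pricing model: each package carries a fixed cost $F$ (packaging, shelving, handling) plus $v$ for each serving of kale inside it.

```latex
% Hypothetical linear cost model: fixed per-package cost F, per-serving kale cost v.
\begin{aligned}
\text{price of a } q\text{-serving package} &\approx F + v\,q \\
\text{price per serving} &\approx \frac{F}{q} + v \\
\frac{\text{per-serving price of a 1-serving pack}}{\text{per-serving price of a 5-serving pack}}
  &= \frac{F + v}{(F + 5v)/5} = \frac{5\,(F + v)}{F + 5v}
\end{aligned}
```

When the fixed cost dominates ($F \gg v$, i.e. kale is cheap relative to getting it onto the shelf), the ratio approaches 5, so the single serving costs nearly as much as the five-serving mound. When $F \ll v$ it approaches 1 and single servings would look reasonable.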

I love home-made salad, and probably eat much less of it than I would for this kind of reason, so the question of why I can’t buy convenient scale greens often crosses my mind, and I welcome better answers (both to why the market is like this, and the question of how to eat delicious salad now and then anyway).

Image by beauty_of_nature from Pixabay

-

Athletic education vs. athletic torment

I don’t know if it occurred to me until my thirties to think of exercise as an enjoyable thing. I was familiar with finding obscure corner-cases that were fun, such as Dance Dance Revolution. But the idea of it just often being a good time was alien.

I hesitate to blame anyone for anything, but school seems culpable here. I got the impression so firmly that PE class (‘physical education’ or ‘physed’) was a kind of horror, I’m not sure I would have treated this fact as on less solid ground than ‘you’re supposed to put a methods section in your lab report’. It just seemed like the way of things.

For children like me, anyway. Some children liked PE, but those were a totally different kind of creature. What the PE telos included was being awful for nerdy children, maybe to punish them for not being jocklike children. Since nerd children generally like not being jocks, they do not take this punishment as any serious feedback on their way of being, and just nobly withstand it. This is the way it is meant to be, and so it goes for every nerd until they break happily free and never play sports again. So was my rough picture of the situation, if I recall.

So this seems to mark a failure on the part of the schools: couldn’t they have at least got the message to me that this wasn’t the intended narrative, if it wasn’t?

But also, looking at the teaching methods, they don’t seem clearly distinguishable from what one might do if trying to traumatize non-athletic children:

Take all the children, with varying pre-existing levels of athletic skill and mortification about their bodies, and get them to change into different and skimpier outfits in front of each other.

Put them in ‘teams’. This ensures that when one of them does badly at the task, they are letting down half of their classmates (people whose social training so far consists largely of surviving and enacting schoolyard social dynamics, so their handling of this is unlikely to be ideally empathetic and graceful).

Make multiple teams, so while half the class is disappointed by a child’s failure, the other half has a positive stake in it and may cheer the child on contemptuously. This might help teach the child to distrust positivity directed toward them, and respond to praise with stress and doubt rather than accepting it naively.

Traipse the group around outside shouting things and giving some people roles and authorities. Don’t clearly explain anything so that the whole class can hear it. Make reference to things that nerds won’t remember from previous sports classes.

Pick a task that is a) physically embarrassing to attempt in front of people if you are unathletic or overweight, b) at a level of difficulty that the least athletic classmates will find impossible and the most athletic will make look extremely easy, and c) allows for a chunk of the class to watch in real time and common knowledge as people fail.

Do the activity for a vaguely disclosed period of time. If the nerds seem discouraged or confused, shout things they won’t understand.

Repeat at regular intervals.

(Not all of these elements were true in my own education, and probably few of them were true all of the time, but I think they are all recognizable.)

I’m guessing that from the athletic child’s standpoint, this is a perfectly reasonable way to be taught that exercise is fun, and the teachers are mostly focused on maintaining that success rather than mitigating the losses among the children who are never going to excel there anyway. But I feel like they could have done so much better, especially if the purpose is in fact to educate the bulk of the students about physical activity, rather than, for instance, to scout out the student who might make it in professional sports. If that isn’t the purpose, I think something like it would be cool to have in schools anyway: a class that teaches all students how to get exercise well and enjoyably.

-

Missing markets in executive function

It’s early in the morning, and sadly 1:29pm. After spending some time looking at things and picking them up and walking up the stairs and down the stairs and considering questions like “what should I…”, which my brain apparently considered objects of art more than of imperative, I inched into a decision to go out somewhere. Perhaps it would be clearer there.

After a blur of climbing and descending stairs and seeking objects and forgetting what I was doing and appreciating how beautiful my bag is, I set out. After remembering I should take various medications and going back inside to do that, I set out.

Often my favorite cafe seems too far away, at about four blocks, but today I had wandered halfway there while I considered my options, so I decided to go. It’s a German place that feels homely and wholesome to me in its unamericanness. I too-carefully contemplated different places to sit, and chose outside: today a sunny explosion of roses and umbrellas with words like ‘Reissdorf kölsch’.

I stared at the menu until the waitress had asked me a couple of different questions she hoped would open a conversation about ordering. I tried to go along, but digressed into the pronunciation of ‘Spätzle’ to give myself longer to think. I nearly forgot to order coffee. I slopped my coffee on the floor on the way outside, which the waitress offered to clean up. She brought me my food outside just as I was deciding to move all my objects to a different table, at which moment I slopped much more coffee all over my computer.

My computer was closed, but she seemed concerned by this, and perhaps concerned about me in general. She had already told me where to get silverware and napkins, but she went and got them for me anyway, which was nice because otherwise I was maybe just going to not eat things for fifteen minutes until I became fully conscious that that was why I wasn’t eating.

I’m not usually like this, but sometimes I am, and it’s hard to put a finger on what the difference is, except to point at behaviors such as ‘how long will I inexplicably stare at my arm? If I go to buy a drink, what is the chance I will lose it?’ My understanding is that this kind of thing is called ‘executive function’ and that I don’t have heaps of it at the best of times, but much less at the worst of times.

This restaurant was providing me with a certain amount of executive function alongside afternoon breakfast, just out of kindness and obligation. But what if I could recognize the need, and intentionally buy it? Just go to a place that specialized in that, where they wouldn’t only make sure I order eventually and get my utensils and clean up after me, but actively take charge of causing me to get my shit together and do something in the day?

I was reminded of an idea I had before (from ‘10 things society might try having if it only contained variants of me’):

Shopfronts where you can go and someone else figures out what you want. And you aren’t expected to be friendly or coherent about it. Like, if you are shopping, and yet not having fun, you go there and they figure out that you are the wrong temperature, don’t have enough blood sugar, are taking too serious an attitude to shopping, need ten minutes away from your companions, and should probably buy a pencil skirt. So they get you a smoothie and some comedy and a quiet place to sit down by yourself for a bit, and then send you off to the correct store.

I had thought of the value-add there as ‘figure out what you want’, but I think part of what I was imagining is that they take charge and keep the process happening and ensure that decisions are made and blood sugar is acquired, for instance. Instead of the thought of blood sugar leading to staring into space, or to being reminded of a different idea to do with blood sugar that you want to write down but you can’t figure out where to write because there are too many tabs open on your computer and you think you should close them but first you want to record the idea...

You can buy executive function in some formats: for instance, I recently hired a Chief of Staff. But what if you just want to buy a little bit of executive function sometimes, on demand? Like on the occasional morning when you are failing particularly hard at being a coherent agent, or when you are stressed or in pain and failing to figure out what to do about the stress or pain because you are stressed or in pain? Are these things that only happen to me? (Humorous ADHD YouTube suggests no.)

In my vision for this kind of service, it might live in the category of ‘way to treat yourself’, like getting a manicure (which—for those who haven’t done that—often involves more hand massage and offers of champagne than it might if treated as a more pragmatic nail improvement chore). Instead of just sitting in your living room considering stuff you should maybe do, you can sit in a comfy chair in a nice smelling place petting a cute puppy while someone charming and encouraging talks to you, figures out how you should proceed, and prompts you to do it in easy and compelling pieces.

-

Tax offenses

I paid my taxes this evening. I was disappointed to lose thousands of dollars, but I’d say this was emotionally overshadowed by my disappointment at the web interfaces I had to navigate to lose it.

Which is partly because I’m lucky enough to not be personally much harmed by the former, but also: it’s one thing to reallocate a chunk of the money of every second person in the country, and another thing to burn a chunk of each of their time. The former serves good purposes, if debatably well; the latter serves nobody and feels like such a pointless waste.

To be clear, I’m not so much upset about my own twenty minutes—if I imagine even a fraction of Americans find it as unintuitive as I do, the scale of the destruction feels breathtaking. How many other eyes have peered at these words and numbers today? Could whoever made these pages not have considered what it would be like for another person who is not at least a casual tax hobbyist to use them? Couldn’t they have tried it out a few times on other people? (Am I being unfair, and this is a hard problem somehow?)

I realize actually sending money to the government is the last tiny step in an obscenely wasteful annual cremation of time. That is of course even more awful, but the last step struck me in particular because it feels so avoidable—changing the whole tax system may be tricky, but improving the payment pages feels plausibly solvable by a single person.

Is the harm really breathtaking though? Let us calculate extremely roughly:

How many personal tax returns are filed in the US? It looks like very roughly 160M per year lately (e.g. here, here)

Apparently very roughly a quarter of filers owe money, rather than getting a refund

Let’s just compare the current system to a good payment interface, rather than to, for instance, the government just remembering your bank details from year to year and charging you the amount that they know you owe. I guess a smooth interface would take very roughly ten minutes less.

Let’s conservatively guess that only a tenth of people find this difficult (the rest just know to ignore the first couple of pages of text and that ‘run of the mill tax return’ means ‘Apply payment to Form 1040 - Income Tax’ for the reason of ‘Balance Due or Payment Plan/Installment Agreement’ and not for instance ‘Estimated Tax’ or ‘Extension’ even if they are filing an extension)

So we have 160 million tax returns filed * 25% having to send money * 10 minutes * 10% having trouble = ~40 million minutes

Is that a lot? Well, it’s 76 years, which is coincidentally the male life expectancy in the US. Taxation may or may not be theft, but its practical realization appears to be distributed manslaughter. Is someone held accountable for things like this?
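For anyone who wants to vary the guesses, here is a minimal sketch of the back-of-envelope arithmetic above in Python. Every input is one of the rough guesses stated in the list, not measured data, and the helper name `wasted_minutes` is hypothetical, made up for illustration.

```python
# Average year length in minutes, counting leap years.
MINUTES_PER_YEAR = 60 * 24 * 365.25


def wasted_minutes(returns=160e6, share_owing=0.25,
                   minutes_lost=10, share_struggling=0.10):
    """Hypothetical helper: rough total minutes lost to an awkward payment page.

    All defaults are the rough guesses from the text, not data.
    """
    return returns * share_owing * minutes_lost * share_struggling


minutes = wasted_minutes()
years = minutes / MINUTES_PER_YEAR
print(f"{minutes:,.0f} minutes = {years:.0f} years")
```

With the default guesses this comes to about 40 million minutes, or roughly 76 years. Changing any one guess scales the total proportionally.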

Image by krystianwin from Pixabay

-

Unsickness celebration

When I’ve been sick for a bit, here are some things that may be true:

I haven’t exercised lately

I have developed a vague background sense that I’m fragile and if I were to exercise it should be by walking around the garden or something

I’m dressed in clothes too shlubby to even count as comfortable

I am otherwise behind on some basic life things that take effort and are normally subsidized by social incentives, e.g. showering, putting tissues in the bin, eating things that aren’t Power Crunch bars

I am habitually avoiding other people

I am habitually avoiding places where other people go

I am habitually treating myself as a contamination threat

I’ve been lying around in bed quite a bit

I posit that a problem with this is that these things are somewhat self-reinforcing, so the period of indisposition can insidiously take hold and last substantially longer than the sickness. Feeling unfit, poorly dressed, fragile, gross and contaminatory make various things less appealing, such as visiting a gym, seeing other people, going to the office, leaving one’s room at all, leaving one’s bed at all, embarking on ambitious tidying up ventures.

Worse, it is fairly ambiguous when one stops being sick, so there may not be a moment where it’s very clear you should change your behavior, especially if you continue to feel vaguely bad from these depression-flavored lifestyle factors.

Last time I was sick for a while I was in a fairly good mood during the sickness itself, but was relatively depressed for maybe a month afterwards, which I suspect is related to this kind of thing.

I was sick last week, and seemed to be better on Saturday. To avoid this kind of problem this time, I had an idea: an official end-of-sickness ritual, where you abruptly do all the things a non-sick person would do, and reset your expectations about yourself.

This was my tentative ritual plan:

exercise: run to the gym and do 15+ minutes of intense exercise

groom: shower, shave, apply substances to body, dress nice

share air: go to a cafe, get a manicure

share more (optional): share a drink, share a kiss

These are designed to often hit multiple factors (share a drink: saliva, socializing, unhealthy behavior that can self-signal non-fragility!)

I wrote this draft up to about here, then set out to try it out. I started out in bed, with a bit of a headache, my mouth tasting like Power Crunch bar and time. Going to the gym seemed like not what to do. I intended to report back.

A first obstacle with this plan was that it was actually like 6:30pm by the time I finished writing down this idea, and it turns out that gyms, cafes and nail salons near me mostly consider that past their bedtime.

I’m a member of three different gyms near me though, and one of them was still open for half an hour, so I briefly emotionally reckoned with the fact that I really had to go to the gym RIGHT NOW if I was going to make this work, then got a move on.

I put on some shoes and went out, intending to run there. It was excitingly rainy, and the cars seemed to have a shared sense that politeness to pedestrians is a kind of luxury nobody can afford in this weather. Happily the run was short. The gym is actually a climbing gym with a bit of gym equipment in the back, a tiny bit of it cardio-directed, exactly one machine of which was not taken. So I jumped on and started cycling, without sparing moments to figure out how to adjust it to my height or anything. I think I flipped between being present, thinking thoughts like “okay that’s three minutes, I only have to do that four more times…” and playing a game on my phone. It was okay. I did it, just as the gym was closing. Check.